AI Code Security: What Your Traditional Scanner Misses

Ricardo Argüello — February 21, 2026

CEO & Founder

General summary

Traditional static scanners catch known patterns but can't trace how user input moves through three transformation layers and becomes a dangerous query two components down. AI-powered code analysis reads code more like a senior security researcher would. Anthropic reports that Claude Code Security, its AI-powered analysis tool, found over 500 previously undetected vulnerabilities in production open-source code.

- Static scanners miss context-dependent vulnerabilities that span multiple files and transformation layers

- AI code analysis traces data flows between components and evaluates exploitability in context

- Anthropic's Claude Code Security found 500+ undetected vulnerabilities in production open-source code

- Integrating AI security review into every pull request can catch bugs at as little as 1/30th the cost of fixing them in production

- Mid-market companies without dedicated security teams benefit most from automated contextual analysis

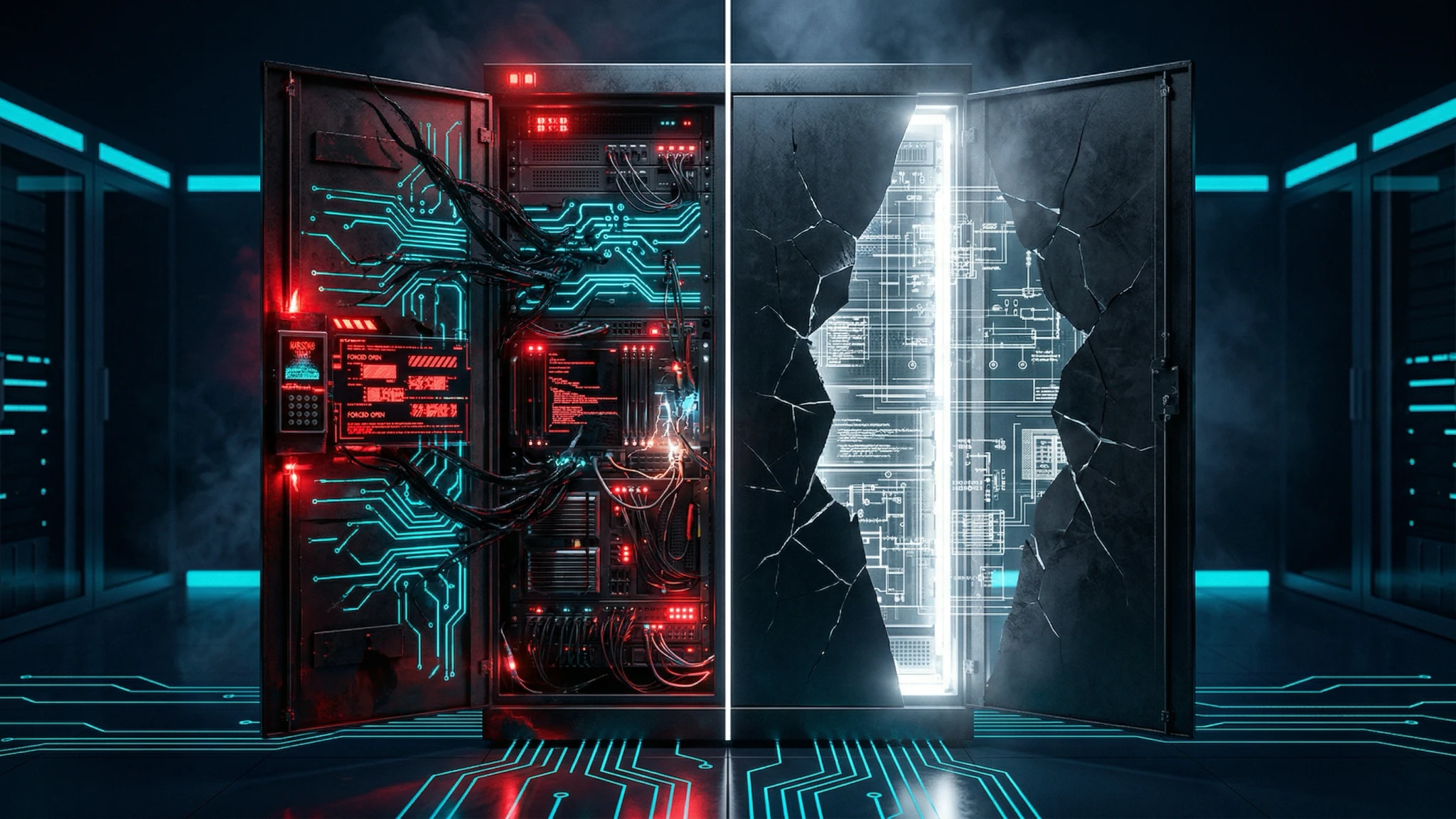

Imagine you have a security guard who checks IDs at the front door but can't follow someone through the building. That's what traditional code scanners do — they spot obvious problems on a single line but miss threats that weave through multiple files. AI-powered analysis is more like a detective who follows the trail from start to finish, catching dangers that slip through the cracks.

AI-generated summary

The Problem With the Scanners You Already Have

Last month, a client asked us to review an application that had passed its security scans cleanly for two years. SonarQube reported no critical vulnerabilities. Snyk showed dependencies up to date. The team slept well at night.

Within the first few hours of AI-assisted review, we found a SQL injection that wasn't obvious. It didn't live on a single line of code; it was spread across three files. A user parameter entered through an endpoint, passed through a transformation service that only partially sanitized it, and ended up in a dynamic query two layers down. Their pattern-based scanner never connected those three steps.

That’s the blind spot. Traditional scanners look for patterns: SELECT * FROM users WHERE id = ${input}. If a vulnerability doesn’t look like a cataloged pattern, it gets a pass.
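To make the flow described above concrete, here is a minimal Python sketch collapsed into one file for readability. It is an illustration, not the client's code: the three functions stand in for the endpoint, the transformation service, and the query layer, and the `users` table, function names, and quote-stripping "sanitizer" are all hypothetical. Because the middle layer only removes quotes, a numeric payload such as `0 OR 1=1` passes through untouched and becomes SQL two layers down. Whether a given scanner flags the last layer depends on its rules; the point is that judging exploitability requires knowing what the middle layer actually removes.

```python
import sqlite3

def make_db() -> sqlite3.Connection:
    """In-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (id, name) VALUES (?, ?)",
                     [(1, "alice"), (2, "bob")])
    return conn

# Layer 1 (endpoint): takes the raw request parameter and hands it on.
def get_user_endpoint(conn: sqlite3.Connection, params: dict) -> list:
    return user_service_lookup(conn, params["id"])

# Layer 2 (transformation service): partial sanitization.
# Removing quotes looks defensive, but a numeric comparison needs no quotes.
def user_service_lookup(conn: sqlite3.Connection, raw_id: str) -> list:
    cleaned = raw_id.replace("'", "").replace('"', "").strip()
    return select_where(conn, "users", {"id": cleaned})

# Layer 3 (generic data access): assembles SQL from whatever it receives.
# Read in isolation, nothing here says the values come from a user.
def select_where(conn: sqlite3.Connection, table: str, filters: dict) -> list:
    clause = " AND ".join(f"{col} = {val}" for col, val in filters.items())
    return conn.execute(f"SELECT name FROM {table} WHERE {clause}").fetchall()

# Fix at the sink: validate the type and bind the value as a parameter.
def select_user_safe(conn: sqlite3.Connection, raw_id: str) -> list:
    return conn.execute("SELECT name FROM users WHERE id = ?",
                        (int(raw_id),)).fetchall()

if __name__ == "__main__":
    conn = make_db()
    print(get_user_endpoint(conn, {"id": "1"}))         # only alice's row
    print(get_user_endpoint(conn, {"id": "0 OR 1=1"}))  # every row in the table
```

The fix sits at the sink rather than in the middle layer: binding the value with a `?` placeholder keeps it data no matter what earlier layers did or didn't strip.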

How AI Reads Code Differently

Anthropic recently published results from Claude Code Security, its AI-powered security analysis tool. The number that matters: Anthropic reports finding over 500 previously undetected vulnerabilities in production open-source code, not in abandoned projects but in code that millions of people rely on daily.

Why does an AI model find what SonarQube, Semgrep, and CodeQL don’t?

The difference is in how they “read” code:

- Static scanner: matches against predefined rules. If a vulnerability doesn’t fit a known pattern, it’s invisible.

- AI analysis: understands code the way a senior security researcher would. It traces data flows between components, grasps the business logic, and evaluates whether a specific flow could be exploited in context.

In practice, this means AI analysis catches entire categories of vulnerabilities that traditional scanners don’t cover:

Authorization Logic Flaws

A scanner can verify that an authentication middleware exists. What it can’t do is check whether permissions are applied consistently across every endpoint — or whether a user with “editor” role could escalate to “admin” through a specific sequence of API calls.
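One common variant of this flaw is mass assignment: an update handler correctly checks that the caller may edit a profile, then applies every field in the request body, including `role`. The Python sketch below is hypothetical (the `User` class, role names, and `EDITABLE_FIELDS` allowlist are illustrations, not any framework's API). A rule can confirm that `can_edit_profile` is called; the question a reviewer has to answer is whether that check actually covers the `role` field.

```python
from dataclasses import dataclass

VALID_ROLES = {"viewer", "editor", "admin"}
EDITABLE_FIELDS = {"name"}  # fields a profile owner may change on their own

@dataclass
class User:
    id: int
    name: str
    role: str

def can_edit_profile(actor: User, target: User) -> bool:
    # Users may edit their own profile; admins may edit anyone's.
    return actor.id == target.id or actor.role == "admin"

def update_user_vulnerable(actor: User, target: User, payload: dict) -> User:
    if not can_edit_profile(actor, target):
        raise PermissionError("not allowed")
    # Flaw: every field in the request body is applied, including "role".
    for field, value in payload.items():
        setattr(target, field, value)
    return target

def update_user_safe(actor: User, target: User, payload: dict) -> User:
    if not can_edit_profile(actor, target):
        raise PermissionError("not allowed")
    # Validate the whole payload before mutating anything.
    unknown = set(payload) - EDITABLE_FIELDS - {"role"}
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")
    if "role" in payload:
        # Role changes get their own check, independent of profile ownership.
        if actor.role != "admin":
            raise PermissionError("only admins may change roles")
        if payload["role"] not in VALID_ROLES:
            raise ValueError("unknown role")
    for field, value in payload.items():
        setattr(target, field, value)
    return target

if __name__ == "__main__":
    editor = User(1, "dana", "editor")
    update_user_vulnerable(editor, editor, {"role": "admin"})
    print(editor.role)  # admin: an editor promoted themselves
```

The design choice here is to treat "who may change a role" as a separate permission rather than a side effect of "who may edit this record", which is exactly the kind of inconsistency that only shows up when reading the endpoint in context.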

Race Conditions in Financial Operations

What happens if two transfer requests are processed simultaneously? Rule-based scanners generally don't model concurrent execution. AI analysis, on the other hand, can identify operations that lack proper locking mechanisms and flag them before they reach production.
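The classic shape of this bug is check-then-act: read the balance, check it, then write a new balance computed from the stale read. This minimal Python sketch uses a hypothetical in-memory `Account` class; the `pause` argument is a test hook added only so the demo can force both requests to read before either writes. It contrasts that with a version where the read, check, and write happen under one lock.

```python
import threading

class Account:
    """Hypothetical in-memory account used to illustrate the race."""

    def __init__(self, balance: int):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int, pause=None) -> bool:
        balance = self.balance           # 1. read the current balance
        if pause is not None:
            pause()                      # test hook: widen the read/write gap
        if balance < amount:             # 2. check against a possibly stale value
            return False
        self.balance = balance - amount  # 3. write, overwriting concurrent updates
        return True

    def withdraw_safe(self, amount: int) -> bool:
        # Read, check, and write happen as one step under the lock.
        with self._lock:
            if self.balance < amount:
                return False
            self.balance -= amount
            return True

def run_concurrently(fn, n: int) -> list:
    """Call fn() from n threads at once and collect each result."""
    results = [None] * n

    def worker(i: int) -> None:
        results[i] = fn()

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results

if __name__ == "__main__":
    acct = Account(100)
    barrier = threading.Barrier(2)
    # Both requests read 100 before either writes, so both are approved.
    print(run_concurrently(lambda: acct.withdraw_unsafe(100, pause=barrier.wait), 2),
          acct.balance)
    safe = Account(100)
    print(run_concurrently(lambda: safe.withdraw_safe(100), 2), safe.balance)
```

An in-process lock only protects a single process. In a service running several instances, the same idea moves into the database, for example a conditional `UPDATE accounts SET balance = balance - ? WHERE id = ? AND balance >= ?` that checks the affected row count, or a row lock taken with `SELECT ... FOR UPDATE`.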

Injections That Cross Abstraction Layers

Modern injections look nothing like the ' OR 1=1 -- of 15 years ago. They pass through ORMs, intermediary services, and multiple data transformations before reassembling as a dangerous query three layers down. No single rule catches that trajectory.
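A cross-layer case worth seeing is second-order injection. The value is stored with a parameterized insert, which looks correct at the point of entry, and only becomes dangerous when a different component reads it back and splices it into SQL. In this hypothetical Python/SQLite sketch, a display name saved at signup is later reused by a reporting job; the tables and functions are illustrations.

```python
import sqlite3

def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE users (id INTEGER PRIMARY KEY, display_name TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL);
        INSERT INTO orders (customer, total) VALUES ('alice', 40.0), ('bob', 75.0);
    """)
    return conn

# Signup service: parameterized, so this layer is safe on its own.
def register(conn: sqlite3.Connection, display_name: str) -> int:
    cur = conn.execute("INSERT INTO users (display_name) VALUES (?)",
                       (display_name,))
    return cur.lastrowid

def _display_name(conn: sqlite3.Connection, user_id: int) -> str:
    (name,) = conn.execute("SELECT display_name FROM users WHERE id = ?",
                           (user_id,)).fetchone()
    return name

# Reporting job, elsewhere in the codebase: trusts data already in the DB.
def order_totals_unsafe(conn: sqlite3.Connection, user_id: int) -> list:
    name = _display_name(conn, user_id)
    sql = f"SELECT customer, total FROM orders WHERE customer = '{name}'"
    return conn.execute(sql).fetchall()

# Fix: treat stored values as untrusted too and bind them as parameters.
def order_totals_safe(conn: sqlite3.Connection, user_id: int) -> list:
    name = _display_name(conn, user_id)
    return conn.execute("SELECT customer, total FROM orders WHERE customer = ?",
                        (name,)).fetchall()

if __name__ == "__main__":
    conn = make_db()
    evil = register(conn, "x' OR '1'='1")
    print(order_totals_unsafe(conn, evil))  # every customer's orders
    print(order_totals_safe(conn, evil))    # no rows
```

Neither function is obviously wrong when read alone; the vulnerability exists only in the path from the signup form, through storage, into the reporting query.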

What This Means for Companies Without a Dedicated Security Team

Let’s be direct: most mid-market companies don’t have a dedicated code security team. They have developers doing their best, running a scanner occasionally, and hoping nothing breaks.

It’s not negligence. A senior security engineer costs $150,000 to $200,000 USD per year. A three-person application security team costs roughly $450,000 to $600,000 a year in salaries alone. For a 50- or 100-person company, those numbers don’t work.

AI-powered code analysis changes that equation. It doesn’t replace a full security team, but it closes the most dangerous gap: reviewing the code being written every day.

At IQ Source, we build this kind of analysis directly into our development and audit process. When we build software for a client or audit existing code, we don’t rely solely on static scanners. We combine AI-powered analysis with engineers who understand the business context. That’s what makes it possible to find vulnerabilities specific to each application, not just the generic ones.

Building Security Into the Development Cycle

The most effective way to use AI security analysis isn’t as an annual audit. It’s as part of the daily development flow.

At the Pull Request

Every PR gets analyzed before merge. The model reviews changes in context: not just the diff, but how those changes affect existing data flows. If a new endpoint introduces a parameter that eventually reaches a query without proper sanitization, it gets flagged right there.
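Reviewing "not just the diff" starts with deciding what else to look at. One simple approach, sketched here under stated assumptions, is to build a call graph and pull in every function that calls, or is called by, a changed function, so the reviewer (human or model) sees the flow the change participates in. This uses Python's standard `ast` module and only resolves direct calls by plain name within a single module; the sample source and function names are hypothetical, and real tooling would need cross-module and method resolution.

```python
import ast
from collections import defaultdict

def call_graph(source: str) -> dict:
    """Map each top-level function name to the plain names it calls."""
    graph = {}
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            graph[node.name] = {
                sub.func.id
                for sub in ast.walk(node)
                if isinstance(sub, ast.Call) and isinstance(sub.func, ast.Name)
            }
    return graph

def _reach(start: set, edges: dict) -> set:
    """All names reachable from start by following edges (depth-first)."""
    seen, stack = set(), list(start)
    while stack:
        for nxt in edges.get(stack.pop(), ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def review_context(source: str, changed: set) -> set:
    """Changed functions plus everything upstream (callers) and downstream (callees)."""
    graph = call_graph(source)
    # Keep only edges to functions defined in this module.
    forward = {f: {c for c in calls if c in graph} for f, calls in graph.items()}
    backward = defaultdict(set)
    for caller, callees in forward.items():
        for callee in callees:
            backward[callee].add(caller)
    return set(changed) | _reach(changed, forward) | _reach(changed, backward)

SAMPLE = '''
def endpoint(req):
    return service(req["id"])

def service(raw):
    return query(raw.strip())

def query(value):
    return run(f"SELECT * FROM t WHERE id = {value}")

def run(sql):
    return sql

def unrelated():
    return 1
'''

if __name__ == "__main__":
    # A PR that only touches service() still puts endpoint, query, and run in scope.
    print(sorted(review_context(SAMPLE, {"service"})))
```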

At Architecture Review

This is where the value compounds. Before implementing a new module, AI analysis evaluates the proposed design against known vulnerability patterns — “If you implement authentication this way, there’s an attack vector when…” — delivering that feedback before a single line of code is written.

In Continuous Integration

Finally, the CI/CD pipeline includes a security analysis step that goes beyond what traditional SAST offers. Rather than matching patterns, it evaluates complete flows and generates reports with enough context for the developer to understand why something is a risk, not just which line causes it.

For companies that already have an enterprise API strategy, the security of the code behind those endpoints is especially critical. A poorly secured API is an open invitation.

The Real Cost of Not Reviewing Your Code

The statistic that gets executive attention: according to IBM's 2024 Cost of a Data Breach report, the global average cost of a data breach was $4.88 million USD. For mid-market companies, a single breach can be an existential threat.

But the cost isn’t always a massive breach. Sometimes an enterprise client discovers the vulnerability during their own audit and cancels the contract. Other times a compliance process fails because the code doesn’t meet SOC 2 or ISO 27001 standards. And in the worst cases, a late-discovered vulnerability forces months of rework — refactoring components that are already in production.

Fixing a vulnerability during development is commonly estimated to cost 5 to 30 times less than fixing it in production. AI analysis makes it possible to find it at that early stage.

Getting Started Without Overbuilding

You don’t need to hire a CISO or deploy a $200,000 platform to improve your code security. A practical approach:

Start with your critical code. Identify the modules that handle authentication, payments, personal data, and authorization logic. These are where the highest-impact vulnerabilities hide.

Then integrate analysis into the pipeline. Add an AI-powered security review step to your CI/CD. It doesn’t have to block deploys initially — it can start as advisory.
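The advisory-first rollout can be expressed as a small gate script that runs after the analysis step. This Python sketch assumes the analyzer writes its findings as a JSON list of objects with `severity`, `file`, and `message` fields; that format, the `SECURITY_GATE_MODE` environment variable, and the severity names are hypothetical, so adapt them to whatever your tool actually emits. In advisory mode it reports and always passes; in blocking mode it fails the job when any finding meets the threshold.

```python
import json
import os
import sys

SEVERITY_ORDER = ["low", "medium", "high", "critical"]

def gate(findings: list, mode: str = "advisory", threshold: str = "high") -> int:
    """Return the CI step's exit code: 0 passes, 1 fails."""
    limit = SEVERITY_ORDER.index(threshold)
    over = [f for f in findings if SEVERITY_ORDER.index(f["severity"]) >= limit]
    for f in findings:
        tag = "over threshold" if f in over else "below threshold"
        print(f"{f['severity'].upper():8} {f['file']}: {f['message']} ({tag})")
    if mode == "blocking" and over:
        return 1
    return 0  # advisory mode never blocks the deploy

def main(argv: list) -> int:
    # Usage: python security_gate.py findings.json
    with open(argv[1], encoding="utf-8") as fh:
        findings = json.load(fh)
    mode = os.environ.get("SECURITY_GATE_MODE", "advisory")
    return gate(findings, mode=mode)

if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv))
```

Switching from advisory to blocking then becomes a one-line pipeline change (setting the mode variable), which makes it easy to tighten the gate once the team trusts the findings.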

Once you’re seeing results, establish the process around it: who reviews findings, how they’re prioritized, and what the fix SLA is by severity.
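Writing the fix SLA down as data lets the same pipeline enforce it. The day counts below are placeholders, not a recommendation; each team sets its own. This Python sketch (the `Finding` fields and `FIX_SLA_DAYS` values are hypothetical) computes a due date for each finding and lists the overdue ones, most severe first, using only the standard `datetime` module.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Placeholder SLA in calendar days per severity; replace with your own policy.
FIX_SLA_DAYS = {"critical": 2, "high": 7, "medium": 30, "low": 90}
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}

@dataclass
class Finding:
    id: str
    severity: str
    opened: date
    owner: str  # who reviews and fixes it

def due_date(f: Finding) -> date:
    return f.opened + timedelta(days=FIX_SLA_DAYS[f.severity])

def overdue(findings: list, today: date) -> list:
    """Findings past their SLA, most severe first, then oldest first."""
    late = [f for f in findings if today > due_date(f)]
    return sorted(late, key=lambda f: (SEVERITY_RANK[f.severity], f.opened))

if __name__ == "__main__":
    backlog = [Finding("F1", "critical", date(2026, 3, 1), "ana"),
               Finding("F2", "low", date(2026, 3, 1), "ben")]
    for f in overdue(backlog, date(2026, 3, 5)):
        print(f.id, f.owner, "was due", due_date(f))
```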

If your company is in the process of modernizing legacy systems, this is the ideal moment to bake AI-powered security analysis into the new architecture from the design phase.

At IQ Source, we design these processes for companies that need enterprise-grade security without the cost of a full internal team. If your code hasn’t gone through an AI-powered security review, that’s the first step: schedule a conversation and we’ll show you what we’d find in your most critical modules before you commit to anything.

Frequently Asked Questions

Effective audits combine traditional static analysis with AI-powered contextual review. Standard scanners catch known patterns, but AI-generated code introduces subtle business logic vulnerabilities that only surface when analyzing complete data flows. An effective audit requires both analysis layers working together.

AI analysis catches context-dependent vulnerabilities that involve multiple interacting components: SQL injections that pass through several transformation layers, authorization logic flaws where permissions are inconsistently checked, and race conditions in concurrent operations that rule-based scanners simply cannot follow.

A focused code audit on a specific module or microservice typically costs $3,000 to $8,000 USD. Full audits of enterprise applications with multiple services range from $15,000 to $50,000 USD, depending on codebase size and integration complexity.

Yes. The analysis integrates directly into CI/CD pipelines to review every pull request before merge. This catches vulnerabilities at development time rather than weeks later in a separate audit. Fixing a bug at this stage costs up to 30 times less than fixing it in production.
