Enterprise API Strategy: Making AI Integration Work

Ricardo Argüello — February 19, 2026

CEO & Founder

General summary

Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, and in our experience the culprit is rarely the model: it's the integration layer. A well-designed enterprise API strategy, with a gateway, integration services, MCP servers, and a proper data layer, is what makes AI work in production.

- In our experience, the most common reason AI projects fail after POC isn't the model: it's the inability to connect to the systems where real data lives

- A proper integration layer has four components: API gateway, integration services, MCP servers, and a data layer

- MCP (Model Context Protocol) acts as a 'universal USB' for AI, replacing fragile custom integrations with a standard interface

- API wrapping, event-driven adapters, and MCP servers let you integrate AI with legacy systems without replacing them

- A basic API gateway can cost $5K-$15K; a full AI integration layer typically runs $25K-$100K, depending on system count and complexity

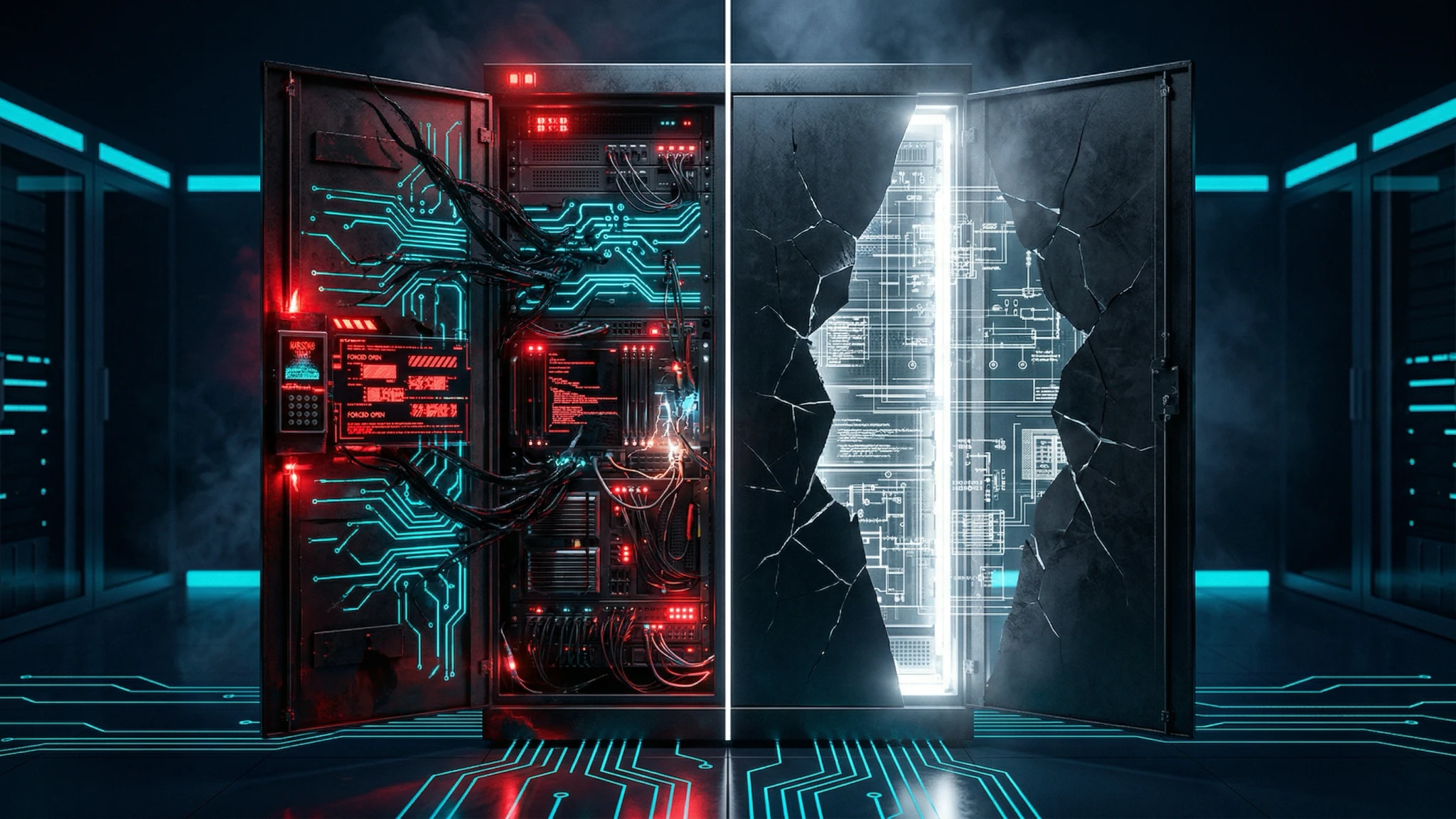

Imagine you buy the best power drill in the world, but none of your drill bits fit it, and you don't have an adapter. The drill is impressive, but useless for actual work. That's what happens when companies buy advanced AI models without building the connection layer to their existing systems — the AI can't reach the data it needs, so the project dies after the demo.

Integration Is the Real Bottleneck for Enterprise AI

There’s an uncomfortable truth in the enterprise AI space: Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, and in most of the cases we see, the model isn’t the problem. These projects fail because they can’t connect to the systems where data lives and where processes are executed.

You can have the most advanced AI model in the world — whether it’s Claude Opus 4.6 or any other — but if it can’t access your ERP, query your CRM, or write to your procurement system, its practical value is close to zero.

This is the reality that AI demos never show. In the demo, the model works with clean data in a controlled environment. In production, it needs to work through a web of heterogeneous systems, inconsistent APIs, legacy databases, and layered security policies.

The integration layer — your APIs — is what makes AI actually work. And for most companies, this layer simply doesn’t exist or is inadequate.

What Is the Anatomy of an Enterprise Integration Layer for AI?

A well-designed integration layer for enterprise AI has four main components:

1. API Gateway: The Unified Entry Point

The API Gateway acts as the single entry point for all communications between AI and enterprise systems. Its functions include:

- Authentication and authorization: verifying each request comes from an authorized source

- Rate limiting: controlling request volume to protect backend systems

- Data transformation: converting formats between different systems

- Logging and monitoring: recording every interaction for auditing and debugging

- Intelligent routing: directing requests to the correct service based on context
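To make these functions concrete, here is a minimal Python sketch of a gateway that authenticates agents by API key, checks a per-route scope, applies a per-agent token-bucket rate limit, routes to a backend handler, and records an audit entry for every request. The keys, scopes, routes, and limits are hypothetical, data transformation is left out, and a production deployment would normally use an off-the-shelf gateway rather than hand-rolled code.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical API keys for AI agents, each mapped to the scopes it may use.
API_KEYS: dict[str, set[str]] = {"key-procurement-agent": {"erp:read", "procurement:write"}}


@dataclass
class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second, up to `capacity`."""
    rate: float
    capacity: float
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.last = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Gateway:
    """Single entry point: authenticate, authorize, rate-limit, route, and log."""

    def __init__(self, routes: dict[str, tuple[str, Callable[[dict], dict]]],
                 rate: float, burst: float) -> None:
        self.routes = routes  # path -> (required scope, backend handler)
        self.rate, self.burst = rate, burst
        self.buckets: dict[str, TokenBucket] = {}
        self.audit_log: list[dict] = []

    def handle(self, api_key: str, path: str, payload: dict) -> dict:
        scopes = API_KEYS.get(api_key)
        if scopes is None:
            return self._log(api_key, path, {"status": 401, "error": "UNAUTHENTICATED"})
        if path not in self.routes:
            return self._log(api_key, path, {"status": 404, "error": "UNKNOWN_ROUTE"})
        required_scope, backend = self.routes[path]
        if required_scope not in scopes:
            return self._log(api_key, path, {"status": 403, "error": "MISSING_SCOPE"})
        if api_key not in self.buckets:
            self.buckets[api_key] = TokenBucket(self.rate, self.burst)
        if not self.buckets[api_key].allow():
            return self._log(api_key, path, {"status": 429, "error": "RATE_LIMITED"})
        return self._log(api_key, path, {"status": 200, "data": backend(payload)})

    def _log(self, api_key: str, path: str, response: dict) -> dict:
        # Audit trail of every request; a production gateway would redact the key.
        self.audit_log.append({"agent_key": api_key, "path": path, "status": response["status"]})
        return response


gateway = Gateway(
    routes={
        "/erp/orders": ("erp:read", lambda payload: {"orders": []}),
        "/hr/salaries": ("hr:read", lambda payload: {"salaries": []}),
    },
    rate=0.1,  # refill one token every 10 seconds
    burst=2,   # allow short bursts of two requests
)
statuses = [gateway.handle("key-procurement-agent", "/erp/orders", {})["status"] for _ in range(3)]
print(statuses)  # [200, 200, 429]: the third call exceeds the burst
```

Authentication and scope checks run before the rate limiter, so rejected requests never consume an agent's quota.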

2. Integration Services: The Translators

Integration services are the translation layer between the AI world and the enterprise systems world. They convert AI agent requests into operations that legacy systems can understand, and vice versa.

For example:

- An agent says: “I need customer X’s purchase history for the last 12 months”

- The integration service translates this into: a specific SQL query to the ERP, a filter by date and customer, and a response format the agent can process
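A minimal sketch of that translation, assuming a hypothetical `orders` table in the ERP database (simulated here with an in-memory SQLite database): the agent's request arrives as a structured object, the service turns it into a parameterized SQL query filtered by customer and date, and the rows come back as plain records the agent can process. The table and column names, and the 30-day approximation of a month, are assumptions for illustration.

```python
import sqlite3
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class PurchaseHistoryRequest:
    """Structured form of "purchase history for customer X, last N months"."""
    customer_id: str
    months: int = 12


def build_query(req: PurchaseHistoryRequest, today: date) -> tuple[str, tuple[str, str]]:
    # Assumption: a month is approximated as 30 days to keep the sketch short.
    since = today - timedelta(days=30 * req.months)
    sql = ("SELECT order_id, order_date, total_amount FROM orders "
           "WHERE customer_id = ? AND order_date >= ? ORDER BY order_date")
    # Values are bound as parameters, never string-formatted, so agent input cannot inject SQL.
    return sql, (req.customer_id, since.isoformat())


def fetch_purchase_history(conn: sqlite3.Connection, req: PurchaseHistoryRequest,
                           today: date) -> dict:
    sql, params = build_query(req, today)
    rows = conn.execute(sql, params).fetchall()
    # Shape the rows into a response the agent can process directly.
    return {
        "customer_id": req.customer_id,
        "period_start": params[1],
        "orders": [{"order_id": r[0], "order_date": r[1], "total_amount": r[2]} for r in rows],
    }


# Usage against an in-memory stand-in for the ERP database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id TEXT, customer_id TEXT, order_date TEXT, total_amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?, ?, ?)", [
    ("A1", "C001", "2024-12-01", 100.0),
    ("A2", "C001", "2025-06-15", 250.0),
    ("A3", "C002", "2025-07-01", 80.0),
])
history = fetch_purchase_history(conn, PurchaseHistoryRequest("C001"), today=date(2026, 2, 19))
print(history)
```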

3. MCP Servers: The Emerging Standard

The Model Context Protocol (MCP) is fundamentally changing how AI models connect with external systems. Think of MCP as a “universal USB” for AI: instead of creating custom connectors for each system, MCP provides a standard that any MCP-compatible AI application can use to access any system exposed through an MCP server.

MCP servers offer:

- Standardized interface: any compatible AI model can use the same connector

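- Capability discovery: the client can ask a server which tools (operations) it offers and what inputs each one expects

- Security controls: the server decides which operations to expose and can enforce access control per operation; for remote servers, the protocol also defines an authorization flow

- Stateful sessions: the client and server keep a session open across requests, so context can carry over between interactions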

4. Data Layer: The Source of Truth

The data layer manages how AI accesses and modifies information in enterprise systems:

- Data warehouses: for analytical queries and reports

- Caches: for frequently accessed data that doesn’t need to be real-time

- Event streams: for constantly changing data that needs real-time processing

- Data lakes: for unstructured data like documents, emails, and files
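For the cache tier, a read-through cache with a time-to-live (TTL) is one common approach: the first request loads from the backend, later requests within the TTL are served from memory, and stale entries trigger a reload. This sketch assumes a single process and an injectable clock; a shared deployment would more likely use a distributed cache, and the 300-second TTL is only an example.

```python
import time
from typing import Any, Callable


class TTLCache:
    """Read-through cache: serves a stored value until it is older than `ttl_seconds`."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable clock makes expiry easy to test
        self._store: dict[str, tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]            # fresh enough: skip the backend call
        value = loader()               # missing or stale: hit the backend
        self._store[key] = (now, value)
        return value


# Usage with a fake clock so expiry is deterministic.
fake_now = [0.0]
backend_calls: list[str] = []


def load_catalog() -> dict:
    backend_calls.append("catalog")    # stands in for a slow ERP query
    return {"SKU-1": "Steel bolt M8"}


cache = TTLCache(ttl_seconds=300, clock=lambda: fake_now[0])
cache.get_or_load("catalog", load_catalog)   # miss: loads from the backend
cache.get_or_load("catalog", load_catalog)   # hit: served from cache
fake_now[0] = 301.0
cache.get_or_load("catalog", load_catalog)   # expired: reloads
print(len(backend_calls))  # 2
```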

Design APIs That Work for AI

APIs designed for human consumption (web interfaces, mobile apps) have different requirements than APIs designed for AI agents. Here are the key principles:

Principle 1: Rich Context in Responses

A human using a web interface can interpret ambiguous data. An AI agent needs explicit context in every response:

- Include metadata: data types, units, valid ranges

- Provide relationships: links to related entities

- Add semantics: describe what each field means, not just its value
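As an illustration of these three points, here is a hypothetical invoice response that carries types, units, a valid range, field meanings, and links to related resources alongside the raw values. The field names, URL paths, and descriptions are invented for the example; the point is the shape, not a specific schema.

```python
import json


def invoice_response(invoice_id: str, amount: float, currency: str,
                     status: str, supplier_id: str) -> dict:
    """Wrap raw invoice fields with types, units, valid ranges, meanings, and links."""
    return {
        "data": {"invoice_id": invoice_id, "amount": amount, "status": status},
        "meta": {
            "fields": {
                "amount": {
                    "type": "decimal",
                    "unit": currency,
                    "minimum": 0,
                    "description": "Invoice total including tax",  # hypothetical semantics
                },
                "status": {
                    "type": "enum",
                    "allowed": ["pending", "approved", "paid"],
                    "description": "Approval state; only 'approved' invoices can be paid",
                },
            }
        },
        "links": {
            "supplier": f"/v1/suppliers/{supplier_id}",
            "payments": f"/v1/invoices/{invoice_id}/payments",
        },
    }


print(json.dumps(invoice_response("INV-1001", 1250.0, "USD", "pending", "S-42"), indent=2))
```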

Principle 2: Atomic and Composite Operations

AI agents need both granular operations (reading a specific field) and composite operations (executing a complete approval workflow):

- Granular APIs for specific queries and point updates

- Orchestration APIs for multi-step workflows

- Batch APIs for bulk operations (invoice processing, inventory updates)
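Batch APIs raise a design question the other two don't: what happens when some items fail? One reasonable answer, sketched below for inventory updates, is to process each item independently and return a per-item result with a machine-readable code, so the agent can retry or escalate only the failures. The SKU names and error codes are hypothetical, and an all-or-nothing (transactional) batch is an equally valid choice when partial updates would be unsafe.

```python
from dataclasses import dataclass


@dataclass
class ItemResult:
    index: int   # position of the item in the submitted batch
    ok: bool
    detail: str  # new stock level on success, machine-readable error code on failure


def update_inventory_batch(stock: dict[str, int], updates: list[dict]) -> dict:
    """Apply each stock adjustment independently and report one result per item."""
    results: list[ItemResult] = []
    for i, update in enumerate(updates):
        sku, delta = update.get("sku"), update.get("delta")
        if sku not in stock:
            results.append(ItemResult(i, False, "UNKNOWN_SKU"))
        elif not isinstance(delta, int):
            results.append(ItemResult(i, False, "INVALID_DELTA"))
        elif stock[sku] + delta < 0:
            results.append(ItemResult(i, False, "INSUFFICIENT_STOCK"))
        else:
            stock[sku] += delta
            results.append(ItemResult(i, True, f"new_level={stock[sku]}"))
    succeeded = sum(r.ok for r in results)
    return {"succeeded": succeeded, "failed": len(results) - succeeded, "results": results}


stock = {"SKU-1": 10, "SKU-2": 0}
report = update_inventory_batch(stock, [
    {"sku": "SKU-1", "delta": -3},
    {"sku": "SKU-2", "delta": -1},
    {"sku": "SKU-9", "delta": 5},
])
print(report["succeeded"], report["failed"])  # 1 2
```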

Principle 3: Reliable Error Handling

When a human sees an error, they can interpret the context and make a decision. An AI agent needs actionable errors:

- Specific error codes: not just “400 Bad Request” but “BUDGET_EXCEEDED” or “SUPPLIER_NOT_APPROVED”

- Suggested actions: “retry after 5 seconds” or “escalate to purchasing manager”

- Error context: what was attempted, why it failed, and what options exist
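A minimal sketch of an error object that carries all three elements: a specific code, a suggested next action, and context about what was attempted. The codes mirror the examples above; the action names, field names, and budget check are assumptions for illustration.

```python
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class ActionableError:
    code: str                                    # machine-readable, e.g. "BUDGET_EXCEEDED"
    message: str                                 # human-readable explanation
    suggested_action: str                        # what the agent should do next
    context: dict = field(default_factory=dict)  # what was attempted and why it failed
    retry_after_seconds: Optional[int] = None    # set only for transient errors


def check_purchase(amount: float, remaining_budget: float,
                   supplier_approved: bool) -> Optional[ActionableError]:
    if not supplier_approved:
        return ActionableError(
            "SUPPLIER_NOT_APPROVED",
            "The supplier is not on the approved list.",
            "escalate_to_purchasing_manager",
            {"attempted": "create_purchase_order"},
        )
    if amount > remaining_budget:
        return ActionableError(
            "BUDGET_EXCEEDED",
            f"Order of {amount:.2f} exceeds remaining budget of {remaining_budget:.2f}.",
            "reduce_amount_or_request_budget_increase",
            {"attempted": "create_purchase_order", "requested": amount,
             "remaining": remaining_budget, "overage": amount - remaining_budget},
        )
    return None  # no error: the purchase can proceed


error = check_purchase(12_000, 10_000, supplier_approved=True)
print(asdict(error))
```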

Principle 4: Versioning and Compatibility

AI systems need API stability because unexpected changes can cause cascading errors:

- Explicit versioning: major versions (v1, v2, v3) with deprecation notices

- Backward compatibility: new versions don’t break existing integrations

- Living documentation: OpenAPI specifications updated automatically
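One way to keep existing integrations stable is to version by URL path, make new versions additive, and announce retirements in advance. This sketch routes `/v1/...` and `/v2/...` to separate handlers; v2 only adds a field, and v1 responses carry a `Sunset` header (the HTTP header defined in RFC 8594 for announcing a resource's retirement). The supplier data and the sunset date are hypothetical.

```python
from typing import Callable


def get_supplier_v1(supplier_id: str) -> dict:
    return {"id": supplier_id, "name": "Acme Corp"}


def get_supplier_v2(supplier_id: str) -> dict:
    # v2 only adds a field; v1 clients keep receiving the shape they were built against.
    return {"id": supplier_id, "name": "Acme Corp", "approval_status": "approved"}


HANDLERS: dict[str, Callable[[str], dict]] = {"v1": get_supplier_v1, "v2": get_supplier_v2}
# Hypothetical retirement date for v1, announced via the Sunset header.
SUNSET = {"v1": "Thu, 31 Dec 2026 23:59:59 GMT"}


def route(path: str) -> tuple[int, dict, dict]:
    """Dispatch /{version}/suppliers/{id}; returns (status, headers, body)."""
    parts = path.strip("/").split("/")
    if len(parts) != 3 or parts[0] not in HANDLERS or parts[1] != "suppliers":
        return 404, {}, {"error": "UNKNOWN_VERSION_OR_RESOURCE"}
    version, _, supplier_id = parts
    headers = {"Sunset": SUNSET[version]} if version in SUNSET else {}
    return 200, headers, HANDLERS[version](supplier_id)


print(route("/v1/suppliers/S-42"))
print(route("/v2/suppliers/S-42"))
```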

Principle 5: Observability

You need to know exactly what AI is doing with your systems at all times:

- Distributed tracing: following a request from the agent to the backend system

- Performance metrics: latency, throughput, error rate per API

- Intelligent alerts: notifications when usage patterns are anomalous
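Distributed tracing works by passing a shared trace identifier along with each hop. A common way to do this over HTTP is the W3C Trace Context `traceparent` header, formatted as `version-traceid-parentid-flags`. The sketch below creates one trace and gives each hop a new span ID while keeping the trace ID, so logs from the agent, gateway, and backend can be joined. Service names are hypothetical, and in practice a tracing library such as OpenTelemetry would generate and export these spans for you.

```python
import secrets


def new_traceparent() -> str:
    """Start a trace: version 00, 16-byte trace-id, 8-byte span-id, sampled flag 01."""
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-01"


def child_traceparent(parent: str) -> str:
    """Keep the trace-id and flags, replace the span-id so each hop is its own span."""
    version, trace_id, _parent_span_id, flags = parent.split("-")
    return f"{version}-{trace_id}-{secrets.token_hex(8)}-{flags}"


# One agent request crossing the gateway into the ERP (service names are hypothetical).
spans = []
header = new_traceparent()
for service in ("procurement-agent", "api-gateway", "erp-adapter"):
    spans.append({"service": service, "traceparent": header})
    header = child_traceparent(header)

for span in spans:
    print(span["service"], span["traceparent"])
```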

Connecting AI with Legacy Systems

How do you connect AI to legacy systems? This is the question we hear most from companies with 10, 15, or 20 years of accumulated systems. The answer isn’t “replace everything”; that’s expensive, risky, and generally unnecessary.

At IQ Source, we’ve found that four patterns cover the vast majority of legacy integration scenarios:

Pattern 1: API Wrapping

What it is: Creating a modern API that wraps legacy system functions.

How it works: An intermediary service exposes REST or GraphQL endpoints that internally call the legacy system, whether through its existing API (however basic), direct access to its database, or even automation of its user interface.

Best for: Systems with stable functionality that aren’t going to change soon.

Real example: A 15-year-old ERP with a SOAP interface is wrapped in a modern REST API that AI agents can consume directly.
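A minimal sketch of the wrapping idea: the legacy ERP speaks SOAP, and the wrapper exposes a plain function (the body of a hypothetical `GET /customers/{id}` endpoint) that returns JSON-ready data. The legacy call is stubbed with a canned SOAP 1.1 response; the `urn:example:legacy-erp` namespace and the element names are invented, and a real wrapper would send the envelope over HTTP and handle SOAP faults.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
ERP_NS = "urn:example:legacy-erp"                      # hypothetical ERP namespace


def legacy_get_customer(customer_id: str) -> str:
    """Stub for the legacy SOAP call; a real wrapper would POST an envelope to the ERP."""
    return f"""<soap:Envelope xmlns:soap="{SOAP_NS}" xmlns:erp="{ERP_NS}">
  <soap:Body>
    <erp:GetCustomerResponse>
      <erp:CustomerId>{customer_id}</erp:CustomerId>
      <erp:Name>Acme Corp</erp:Name>
      <erp:CreditLimit>50000.00</erp:CreditLimit>
    </erp:GetCustomerResponse>
  </soap:Body>
</soap:Envelope>"""


def get_customer(customer_id: str) -> dict:
    """Modern facade: call the legacy system and return a flat, typed record."""
    ns = {"soap": SOAP_NS, "erp": ERP_NS}
    root = ET.fromstring(legacy_get_customer(customer_id))
    resp = root.find("soap:Body/erp:GetCustomerResponse", ns)
    if resp is None:
        raise ValueError("Unexpected SOAP response shape")
    return {
        "customer_id": resp.findtext("erp:CustomerId", namespaces=ns),
        "name": resp.findtext("erp:Name", namespaces=ns),
        "credit_limit": float(resp.findtext("erp:CreditLimit", namespaces=ns)),
    }


print(get_customer("C001"))
```

Agents only ever see the flat record; the SOAP details stay inside the wrapper, so the legacy system can later be replaced without changing the API the agents use.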

Pattern 2: Event-Driven Adapters

What it is: Capturing events from the legacy system and publishing them to a modern event bus.

How it works: An adapter monitors changes in the legacy system (new records, updates, alerts) and publishes them as events that AI agents can consume in real time.

Best for: Systems where you need to react to changes in real time.

Real example: When an invoice is registered in the legacy accounting system, an event is automatically published and an AI agent processes it for reconciliation.
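If the legacy system can't emit events itself, one simple adapter polls a table for rows past a high-water mark and publishes each new row as an event. The sketch below does this for invoices, using an in-memory SQLite table and a tiny in-process bus as stand-ins; it assumes invoice IDs only ever increase, and the topic name `invoice.registered` is hypothetical. Database triggers or change data capture are alternatives when polling latency is too high.

```python
import sqlite3
from typing import Callable


class EventBus:
    """In-memory stand-in for a real event bus such as Kafka or RabbitMQ."""

    def __init__(self) -> None:
        self.subscribers: dict[str, list[Callable[[dict], None]]] = {}

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self.subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self.subscribers.get(topic, []):
            handler(event)


class InvoiceAdapter:
    """Polls the legacy invoices table and publishes one event per new row."""

    def __init__(self, conn: sqlite3.Connection, bus: EventBus) -> None:
        self.conn, self.bus = conn, bus
        self.last_seen_id = 0  # high-water mark; assumes ids only ever increase

    def poll_once(self) -> int:
        rows = self.conn.execute(
            "SELECT id, supplier, amount FROM invoices WHERE id > ? ORDER BY id",
            (self.last_seen_id,),
        ).fetchall()
        for invoice_id, supplier, amount in rows:
            self.bus.publish("invoice.registered",
                             {"invoice_id": invoice_id, "supplier": supplier, "amount": amount})
            self.last_seen_id = invoice_id
        return len(rows)


legacy = sqlite3.connect(":memory:")
legacy.execute("CREATE TABLE invoices (id INTEGER PRIMARY KEY, supplier TEXT, amount REAL)")
bus = EventBus()
received: list[dict] = []
bus.subscribe("invoice.registered", received.append)  # the reconciliation agent's handler

legacy.executemany("INSERT INTO invoices (supplier, amount) VALUES (?, ?)",
                   [("Acme Corp", 1200.0), ("Globex", 340.5)])
adapter = InvoiceAdapter(legacy, bus)
first = adapter.poll_once()   # publishes both new invoices
second = adapter.poll_once()  # nothing new since the last poll
print(first, second)  # 2 0
```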

Pattern 3: Database Sync

What it is: Synchronizing data from the legacy system to a modern database that AI can query directly.

How it works: An ETL (Extract, Transform, Load) process copies data from the legacy system to a modern data warehouse periodically or in real time.

Best for: Scenarios where AI only needs to read data, not modify the legacy system.

Real example: The last 5 years of sales data are synced nightly to a warehouse where AI agents run predictive analytics.
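An incremental version of the nightly sync can be sketched with a watermark: extract only rows updated since the last run, transform them, and upsert them into the warehouse so reruns don't create duplicates. Table and column names are hypothetical, both databases are in-memory SQLite (the upsert syntax needs SQLite 3.24 or later), and the sketch ignores edge cases such as rows that share the watermark timestamp.

```python
import sqlite3


def sync_sales(legacy: sqlite3.Connection, warehouse: sqlite3.Connection, watermark: str) -> str:
    """Copy rows changed since `watermark` into the warehouse; return the new watermark."""
    rows = legacy.execute(                                 # Extract
        "SELECT sale_id, sale_date, amount_cents, updated_at FROM sales "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    for sale_id, sale_date, amount_cents, updated_at in rows:
        amount = amount_cents / 100                        # Transform: cents to currency units
        warehouse.execute(                                 # Load: upsert keeps reruns idempotent
            "INSERT INTO fact_sales (sale_id, sale_date, amount) VALUES (?, ?, ?) "
            "ON CONFLICT(sale_id) DO UPDATE SET sale_date = excluded.sale_date, "
            "amount = excluded.amount",
            (sale_id, sale_date, amount),
        )
        watermark = updated_at
    warehouse.commit()
    return watermark


legacy = sqlite3.connect(":memory:")
legacy.execute("CREATE TABLE sales (sale_id INTEGER, sale_date TEXT, amount_cents INTEGER, updated_at TEXT)")
legacy.executemany("INSERT INTO sales VALUES (?, ?, ?, ?)", [
    (1, "2026-02-17", 125000, "2026-02-17T18:00:00"),
    (2, "2026-02-18", 9950, "2026-02-18T09:30:00"),
])
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE fact_sales (sale_id INTEGER PRIMARY KEY, sale_date TEXT, amount REAL)")

mark = sync_sales(legacy, warehouse, watermark="")  # first (full) run
legacy.execute("UPDATE sales SET amount_cents = 10950, updated_at = '2026-02-19T01:00:00' WHERE sale_id = 2")
mark = sync_sales(legacy, warehouse, mark)          # next nightly run picks up only the change
print(warehouse.execute("SELECT sale_id, amount FROM fact_sales ORDER BY sale_id").fetchall())
```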

Pattern 4: MCP Server Wrapper

What it is: Creating an MCP server that exposes legacy system capabilities as tools that any AI model can use.

How it works: The MCP server defines available operations, their parameters, and their results. Any MCP-compatible AI client can automatically discover and use these operations.

Best for: When you want multiple AI models or agents to access the legacy system in a standardized way.

Real example: An MCP server exposes operations like “query_inventory”, “create_purchase_order”, and “verify_customer_credit” that any agent can use without knowing the legacy system’s internal implementation.
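To show what “discover and use” looks like on the wire, here is a simplified, standard-library-only sketch of the two MCP methods involved: `tools/list` for discovery and `tools/call` for invocation, carried as JSON-RPC 2.0 messages. It registers one of the example tools, `query_inventory`, over a stubbed legacy stock table; `create_purchase_order` and `verify_customer_credit` would be registered the same way. It deliberately omits the initialization handshake, transports, and authorization, so treat it as an illustration of the message shapes as we understand them rather than a compliant server; real servers should be built with an official MCP SDK.

```python
import json
from typing import Any, Callable

TOOLS: dict[str, dict[str, Any]] = {}


def tool(name: str, description: str, input_schema: dict) -> Callable:
    """Register a function as a tool, described by a JSON Schema for its arguments."""
    def register(fn: Callable) -> Callable:
        TOOLS[name] = {"fn": fn, "description": description, "inputSchema": input_schema}
        return fn
    return register


_LEGACY_STOCK = {"SKU-1": 120, "SKU-2": 0}  # stub for the legacy inventory module


@tool("query_inventory", "Return on-hand stock for a SKU.",
      {"type": "object", "properties": {"sku": {"type": "string"}}, "required": ["sku"]})
def query_inventory(sku: str) -> dict:
    if sku not in _LEGACY_STOCK:
        raise ValueError(f"Unknown SKU: {sku}")
    return {"sku": sku, "on_hand": _LEGACY_STOCK[sku]}


def handle(message: str) -> str:
    """Answer the JSON-RPC 2.0 methods used for tool discovery and invocation."""
    req = json.loads(message)
    method, params = req.get("method"), req.get("params", {})
    reply: dict[str, Any] = {"jsonrpc": "2.0", "id": req.get("id")}
    if method == "tools/list":
        reply["result"] = {"tools": [
            {"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
            for n, t in TOOLS.items()]}
    elif method == "tools/call":
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        else:
            try:
                output = entry["fn"](**params.get("arguments", {}))
                reply["result"] = {"content": [{"type": "text", "text": json.dumps(output)}],
                                   "isError": False}
            except (ValueError, TypeError) as exc:
                # Tool failures go in the result so the model can see them and react.
                reply["result"] = {"content": [{"type": "text", "text": str(exc)}],
                                   "isError": True}
    else:
        reply["error"] = {"code": -32601, "message": "Method not found"}
    return json.dumps(reply)


print(handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
print(handle(json.dumps({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                         "params": {"name": "query_inventory", "arguments": {"sku": "SKU-1"}}})))
```

The agent never sees how the stock lookup is implemented; it only sees the tool name, description, and input schema, which is what lets the same server work with different MCP-compatible clients.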

Reference Architecture for Enterprise AI

Based on successful implementations, this is the reference architecture we recommend:

Layer 1: AI Agents

- Specialized agents by domain (procurement, sales, support)

- Orchestrator coordinating multiple agents

- Rules engine for enterprise policies

Layer 2: Integration

- API Gateway with authentication and rate limiting

- MCP servers for each enterprise system

- Event bus for asynchronous communication

- Distributed cache for performance

Layer 3: Enterprise Systems

- ERP (SAP, Oracle, Microsoft Dynamics)

- CRM (Salesforce, HubSpot)

- Procurement and purchasing systems

- Accounting and financial systems

- Productivity tools (Google Workspace, Microsoft 365)

Layer 4: Data

- Data warehouse for analytics

- Data lake for unstructured data

- Event store for auditing

- Vector database for semantic search

Measuring the Success of Your API Strategy

Key metrics for evaluating your integration layer:

Performance Metrics

- P95 Latency: 95% of requests should complete in under 500ms

- Availability: 99.9% minimum uptime for critical APIs

- Throughput: ability to handle peak transaction volume with margin
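The first two targets are easy to check with a little arithmetic. The sketch below computes a nearest-rank P95 from latency samples and converts an availability target into a downtime budget; the sample latencies are invented for the example.

```python
def p95(latencies_ms: list[float]) -> float:
    """Nearest-rank 95th percentile: smallest sample with at least 95% of samples <= it."""
    if not latencies_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) using integer math
    return ordered[rank - 1]


def downtime_budget_minutes(availability: float, period_days: float) -> float:
    """Minutes of downtime allowed per period at a given availability target."""
    return (1 - availability) * period_days * 24 * 60


samples = [120.0] * 90 + [480.0] * 5 + [900.0] * 5  # hypothetical latencies
print(p95(samples))                                   # 480.0, under the 500 ms target
print(round(downtime_budget_minutes(0.999, 30), 1))  # 43.2 minutes per 30-day month
print(round(downtime_budget_minutes(0.999, 365) / 60, 2))  # 8.76 hours per year
```

Note that five requests at 900 ms still pass a P95 target of 500 ms; tracking P99 alongside P95 helps catch that tail.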

Adoption Metrics

- System coverage: percentage of enterprise systems accessible via API

- Agent usage: number of operations executed by AI agents per day

- Success rate: percentage of operations completed without errors

Business Metrics

- Integration time: how long it takes to connect a new system or agent

- Cost per transaction: compared to the previous manual process

- Implementation velocity: time to deploy new AI use cases

Common API Strategy Mistakes — and How to Avoid Them

Treating APIs as an afterthought. Building APIs at the end, after implementing systems, instead of designing them as a core part of the architecture. This leads to inconsistent APIs, incomplete documentation, and high maintenance costs. If you’re planning an AI initiative, start with your API layer, not the model.

Not designing for AI consumers. Reusing APIs built for web interfaces without adapting them for AI agent consumption. The result is responses with insufficient context, generic error handling, and suboptimal performance. AI agents need richer metadata, explicit semantics, and actionable error messages — different from what a human-facing UI requires.

Underestimating security for autonomous agents. Existing security controls are rarely sufficient for AI agents that operate without human oversight on every request. In our experience, this is one of the most overlooked risks: unauthorized access, unintended actions, and compliance violations become much more likely when an agent can make hundreds of API calls per minute.

Over-engineering from day one. Building a full integration platform before you have a single AI use case in production almost guarantees budget exhaustion before you see results. Start with the minimum viable integration for your first use case, then expand.

Skipping monitoring and observability. Deploying APIs without proper tracing, metrics, and alerting means problems get discovered when users — or the AI — report errors instead of being detected early. Observability is not optional for AI-driven systems.

How Much Should a Company Invest in Its Integration Layer?

As a rule of thumb, integration investment should represent 20-30% of the total AI project budget. If you’re investing $100,000 in an AI project and $0 in integration, the project will almost certainly fail.

Recommended distribution:

- 30% on API design and implementation

- 25% on connectors and adapters for legacy systems

- 20% on security and governance

- 15% on monitoring and observability

- 10% on documentation and onboarding
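As a worked example of the rule of thumb, take the $100,000 project mentioned above and the midpoint of the 20-30% range: $25,000 for integration, split according to the distribution above. The 25% figure is our choice for the example; swap in your own numbers.

```python
ALLOCATION_PCT = {
    "API design and implementation": 30,
    "Connectors and adapters for legacy systems": 25,
    "Security and governance": 20,
    "Monitoring and observability": 15,
    "Documentation and onboarding": 10,
}


def integration_budget(project_total: int, integration_share_pct: int) -> dict[str, int]:
    """Split the integration share of an AI project budget by the recommended percentages."""
    if sum(ALLOCATION_PCT.values()) != 100:
        raise ValueError("allocation percentages must add up to 100")
    integration_total = project_total * integration_share_pct // 100
    return {area: integration_total * pct // 100 for area, pct in ALLOCATION_PCT.items()}


# Mid-range example: 25% of a $100,000 AI project, i.e. $25,000 for integration.
for area, amount in integration_budget(100_000, 25).items():
    print(f"{area}: ${amount:,}")
```

At 25%, that works out to $7,500 for API design, $6,250 for legacy connectors, $5,000 for security and governance, $3,750 for monitoring, and $2,500 for documentation.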

To estimate the return on investment of modernizing your integration layer, use our ROI calculator which includes specific models for integration projects.

The Key Takeaway

If your company is evaluating AI or already has projects underway that aren’t generating expected results, the problem is likely in the integration — not the model, not the data, not the team. The integration layer is the unglamorous piece that turns impressive demos into working systems.

A practical starting point: audit how many of your enterprise systems have modern, well-documented APIs today. That number tells you more about your AI readiness than any vendor assessment will.

At IQ Source, we design integration layers, MCP servers, and APIs that connect legacy systems with AI agents. If you want a technical evaluation of your current infrastructure, get in touch — we can map your systems, identify integration bottlenecks, and propose a concrete implementation path.

Frequently Asked Questions

Why do most AI projects fail after the proof of concept?

In our experience, most AI projects that fail do so not because of problems with the AI model, but because of integration failures with existing systems. Poorly designed APIs, data fragmented in silos, and legacy systems without modern interfaces are the primary causes.

What is MCP (Model Context Protocol)?

MCP is an open protocol that standardizes how AI models connect with enterprise systems. It works like a 'universal USB' for AI: instead of creating custom integrations for each system, MCP provides a standard that simplifies and accelerates connecting AI with ERPs, CRMs, databases, and other systems.

How much does an enterprise API strategy cost?

An API strategy can be implemented incrementally. A basic API gateway can cost $5,000-15,000 USD. Complete modernization with an AI integration layer typically ranges from $25,000 to $100,000 USD, depending on the number of systems and integration complexity.

Can AI be integrated with legacy systems without replacing them?

Yes. Through patterns like API wrapping, event-driven adapters, and MCP servers, it's possible to create modern integration layers over legacy systems without modifying the original system. This allows AI to access legacy data and functions securely and in a standardized way.
