The runtime is a commodity now. The moat is the workflow.

Ricardo Argüello — April 23, 2026

CEO & Founder

General summary

Anthropic shipped Claude Managed Agents on April 8 at $0.08 per session-hour, with sandboxing, session state, credential vault, tool orchestration, and observability bundled in. The same month, McKinsey reported that 78% of companies claim to use AI while more than 80% still see no measurable impact on earnings. Read together, the two data points say one thing: the agent runtime is now a commodity, and the moat has moved to workflow design.

- Claude Managed Agents charges $0.08 per runtime hour, billed to the millisecond, on the same infrastructure layer where 30 to 50 agent startups raised $10M to $100M in 2024 and 2025

- The bundle includes isolated sandboxes, session persistence, credential handling, tool orchestration, and observability, per InfoQ and SiliconANGLE coverage on April 8

- McKinsey reported the same month that 78% of firms say they use AI and more than 80% still see no bottom-line impact, because they add tools without redesigning the workflow

- Radhika Menon of NTT DATA at launch: “at 8 cents per session hour, you go from idea to production in days, not months.” The differentiation window on infra collapsed

- If the runtime costs the same for every competitor, the only layer left that is yours is how you design the workflow running on top. That is product capability, not infra debt

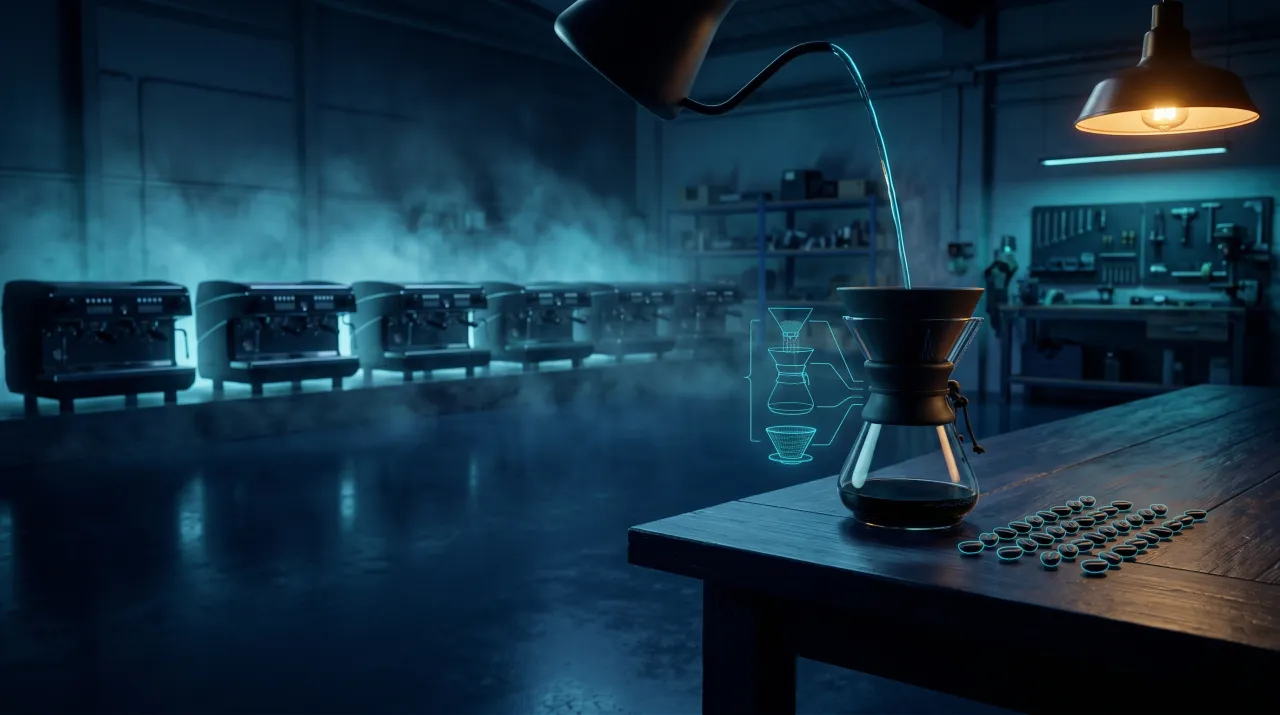

Imagine you opened a coffee shop last year and the thing that set you apart was your $15,000 espresso machine. This week the chain next door drops the same machine, same model, in every location at the same price. Your edge cannot be the machine anymore. It has to be the bean you source, the way the barista is trained, and the menu you put in front of the customer. That is what just happened with AI agents. Anthropic made the standard machine available to everyone at $0.08 an hour. What is yours is the recipe, not the stove.

AI-generated summary

On April 8, Anthropic priced something that, until the week before, was the central thesis of between 30 and 50 agent startups. It put it at $0.08 per session-hour and called it Claude Managed Agents. InfoQ summarized the bundle cleanly: sandbox, session state, credential handling, tool orchestration, observability. All behind one API. All at the same price for Notion, for Rakuten, for Asana, and for your company.

Eight cents an hour, metered to the millisecond. Idle time does not count. SiliconANGLE walked through the math in their launch piece: a one-hour session on Opus 4.6, 50K input tokens, 15K output tokens, total cost of $0.705.
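The arithmetic behind that figure can be reproduced in a few lines. This is a minimal sketch, not SiliconANGLE's or Anthropic's calculator: the $0.08 runtime rate comes from the launch coverage, while the per-token prices ($5 per million input tokens, $25 per million output tokens) are assumptions chosen because they reproduce the quoted $0.705 total. The function and constant names are hypothetical.

```python
from decimal import Decimal

# Runtime rate quoted in the launch coverage.
RUNTIME_PER_ACTIVE_HOUR = Decimal("0.08")

# ASSUMPTION: token prices per million tokens. They are not stated in this
# article; they are picked because they reproduce the $0.705 example.
INPUT_PRICE_PER_MTOK = Decimal("5")
OUTPUT_PRICE_PER_MTOK = Decimal("25")

ONE_MILLION = Decimal(1_000_000)


def session_cost(active_hours, input_tokens, output_tokens):
    """Return runtime cost plus token cost for one session, in dollars.

    Only active time is passed in, because idle time is not billed.
    """
    runtime = RUNTIME_PER_ACTIVE_HOUR * Decimal(str(active_hours))
    tokens_in = INPUT_PRICE_PER_MTOK * Decimal(input_tokens) / ONE_MILLION
    tokens_out = OUTPUT_PRICE_PER_MTOK * Decimal(output_tokens) / ONE_MILLION
    return runtime + tokens_in + tokens_out


# One active hour, 50K input tokens, 15K output tokens.
total = session_cost(1, 50_000, 15_000)
print(total)  # 0.705
```

Under these assumptions the runtime is $0.08 of the $0.705, so the tokens, not the infrastructure, make up most of the bill.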

What got erased in a matter of weeks is the investment thesis of an entire cohort of infra startups. If your 2024 pitch deck said “we solve the hard part of running agents in production for enterprises,” that deck is now history. Not because the work was bad. Because the reference price just got set by the model vendor, and you cannot compete with it without subsidizing every customer.

The layer that was supposed to be a business

The promise of “agent infrastructure as a service” was built on five concrete pieces. Each one was a real problem in 2024. Each one was sold as a separate business. Each one just got packed into a single SKU:

- Sandbox. Ephemeral containers where the agent runs code without touching your prod. Two quarters ago that was a technical pitch involving Firecracker, gVisor, and a thousand isolation details. Today it is a primitive in the API.

- Session persistence. What happens when the agent crashes in the middle of a six-hour task? What happens when the user returns on Monday? Startups solved this with storage, checkpoints, and replay. Now the state lives in the service.

- Credential vault. How do you grant the agent access to your Salesforce, Gmail, or Stripe without pasting the secret in the prompt? WorkOS and several others pushed on this problem for eighteen months. Anthropic now bundles it.

- Tool orchestration. The logic that decides whether the model should hit web search, your internal API, or hand the case off to a human. LangChain, LlamaIndex, CrewAI still exist and are still useful, but they no longer mark the difference between “runs on a laptop” and “runs in production.”

- Agent observability. Structured logs, traces, session replay, visual debug. Arize, LangSmith, Helicone. The managed service includes this at the same price.

Radhika Menon of NTT DATA summarized it in the InfoQ piece with a line every CEO understands: “at 8 cents per session hour, you go from idea to production in days, not months.” That line is the point. It is not that the infra got better; it is that the time your competitor needed to stand it up just collapsed.

The other half of the story came from McKinsey

On April 2, before Anthropic’s launch, McKinsey published “The agentic organization: Contours of the next paradigm for the AI era”. The headline figure is uncomfortable: 78% of surveyed companies say they are using AI, and more than 80% still see no measurable impact on operating profit.

The paper is blunt about why. It is not that AI does not work. It is that most companies treat it as a tool that gets plugged into the existing flow with a single button. That flow was designed five or ten years ago for humans doing every step. AI adds speed to one step, and the bottleneck shifts to the next. The P&L outcome is net zero or worse: license costs that do not reduce headcount because the workflow stays the same.

McKinsey put it in a line leaders should print out: “winners will not simply adopt AI tools; they will boldly rewire workflows to be AI-first.” Seventy-five percent of current roles need reshaping. Not a skills upgrade; reshaping.

The two announcements of the month read as one story. Anthropic removes the excuse that workflow redesign is impossible because the infra is too expensive. McKinsey removes the excuse that buying AI is already the answer.

Why workflow design becomes the moat

“Moat” gets overused. Most of what companies call a moat is a one- or two-year lead time. What lasts ten years is one of three things: a network, a brand, or codified knowledge the competitor cannot reproduce cheaply. Workflow design fits the third one.

Three specific reasons, not generic ones.

Start with where your business context actually lives. A credit approval flow at a credit union runs on regulator rules, on the delinquency history of that specific portfolio, and on fifteen years of committee decisions about edge cases nobody wrote down. None of that context is inside Claude or Anthropic. It only gets codified when someone sits down to design the workflow. If that workflow lives inside a generic agent that your competitor can also buy for $0.08 an hour, you have lost the one asset that was defensible.

Then there is the refactor cost. Claude Sonnet 4.5 shipped in October 2025, Opus 4.6 in January, Opus 4.7 in March. With every release, prompt performance shifts, tool selection rules get rewritten, and the drift pattern looks different. A well-designed workflow absorbs five of those generational changes without the operation breaking. The duct-taped pile of prompts and scripts most teams have today cracks at every upgrade. That ability to absorb change is expensive to build, and a competitor can copy it only by seeing it, which they cannot.

The third reason is about who can deliver it. Anthropic is not going to fly to San José or Bogotá to understand why your operations team runs bank reconciliation at six in the morning instead of midday. The runtime scaffolding and the base model will not solve that either. It gets solved by someone who sits with the team, understands the use case, models it into a workflow, connects it to the commodity runtime now priced at eight cents, and iterates when the next Opus arrives.

What we do at IQ Source about this new split

For this shift we have two pieces that complement each other.

The first is AI Maestro, the discovery line. Before we propose an agent, a runtime, or an architecture, we sit with the client team and map the real work (not the wiki version, the real one) to identify where AI belongs and where it does not. This is the step McKinsey's findings suggest most companies skip: the 80%-plus that see no impact added tools without redesigning the workflow. Walking out of that step with a written map of the workflow to redesign changes the spend committee conversation completely.

The second piece is Technology Partner, which the arrival of commodity agent runtime just put under a spotlight. There are software companies with deep domain focus (industrial automation, AR EdTech, vertical fintech platforms in LatAm) that do not want to turn their internal team into specialists on agents, runtimes, sandboxes, and observability. That knowledge gets purchased by the hour, the same way they buy AWS or Cloudflare today. What they want to keep inside is the product design and the workflow that runs on top.

That is where we come in. Not as staff augmentation writing code by the hour. As co-designers of the workflow. We start by mapping where AI really belongs inside the client’s processes. Then we draw what should and should not get automated. The last piece is the implementation on a commodity runtime, set up so three parties (client, us, the model) can operate it without one becoming a bottleneck for the others.

The position gets clearer when a “build versus buy” decision is on the table. Until recently, a big chunk of the “build” case was that the internal team could control the infra and the quality of the agents. That argument does not have the same weight now. The new “build” case is that the internal team can redesign the workflow using business knowledge no competitor has access to. Getting there without spending two years learning the runtime stack is why you hire a partner that already did.

What to do in the next two weeks

If you are on the buyer side, three conversations are worth having before the end of the month.

Start with your infra team or with the integrator who sold you agents last year. Pull the contracts and compare what you pay today for sandbox, observability, and orchestration against Anthropic’s list price. If the spread is above 3x for a standard use case, you have an immediate renegotiation case on your hands, or it is time to plan a migration.
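That comparison is simple enough to script before the meeting. The sketch below is one possible way to frame it, not a pricing tool: the function names and the sample contract figures are hypothetical, the 3x threshold is the rule of thumb from the paragraph above, and the spend figure should cover only the comparable items (sandbox, observability, orchestration), since token usage is billed separately.

```python
# List price quoted in this article, in dollars per active session-hour.
RUNTIME_LIST_PRICE_PER_HOUR = 0.08

# The "above 3x" rule of thumb from the text.
RENEGOTIATION_THRESHOLD = 3.0


def runtime_spread(monthly_infra_spend: float, active_agent_hours: float) -> float:
    """Return how many times the list price the current contract costs.

    monthly_infra_spend: what you pay today for sandbox, observability,
        and orchestration (excluding model tokens).
    active_agent_hours: hours per month the agents are actually working.
    """
    if active_agent_hours <= 0:
        raise ValueError("active_agent_hours must be positive")
    list_price_equivalent = active_agent_hours * RUNTIME_LIST_PRICE_PER_HOUR
    return monthly_infra_spend / list_price_equivalent


def should_renegotiate(monthly_infra_spend: float, active_agent_hours: float) -> bool:
    """True when the spread exceeds the 3x threshold."""
    return runtime_spread(monthly_infra_spend, active_agent_hours) > RENEGOTIATION_THRESHOLD


# HYPOTHETICAL contract: $1,200 a month for 2,000 active agent-hours.
spread = runtime_spread(1200.0, 2000.0)
print(f"{spread:.1f}x list price, renegotiate: {should_renegotiate(1200.0, 2000.0)}")
```

The hard input is the number of active agent-hours. If your current vendor does not report it, that gap is itself worth raising in the conversation.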

Next, bring it to your CFO. The $0.08 per hour is not a fixed cost; it is variable usage. That changes how the internal AI business case gets presented. Cases that used to fail the filter because of high fixed costs now fit. And cases tied to the old expensive vendor just gained visibility at the spend committee.

Finally, and this is the one most companies skip, find whoever owns workflow redesign inside your company. If the answer to “who owns it” is “no one in particular,” that is the real bottleneck. Not the runtime.

That third conversation is where we can help without a big upfront commitment. It is the first step of AI Maestro: you pick one candidate workflow, and we sit with your team for two hours and draw what becomes an agent on Managed Agents and what stays as traditional code or human decision. No quote attached. If the map is solid, we talk format afterward. The email is the usual: info@iqsource.ai.

Frequently Asked Questions

The Claude Managed Agents runtime is priced at $0.08 per session-hour, billed to the millisecond, and the meter only runs while the agent is actively working; idle time is not charged. That price sits on top of standard Claude token usage and tool costs, such as web search at $10 per 1,000 queries. Anthropic launched the service on April 8, 2026.

Claude Managed Agents bundles isolated container provisioning (sandboxing), session state and credential management, tool orchestration, error recovery, and observability. Developers access the whole stack through the Anthropic API without building scaffolding. Notion, Rakuten, and Asana were listed among the first customers in InfoQ coverage on April 21, 2026.

McKinsey reported in “The agentic organization: Contours of the next paradigm for the AI era” that 78% of firms say they are using AI while more than 80% still see no measurable bottom-line impact. The conclusion is that value is not captured by adding tools but by fully redesigning the workflow, and that 75% of current roles will need reshaping.

When the underlying infrastructure costs the same for every competitor, the differentiator shifts to how each company observes its own process, models the specific use case, and iterates when the base model changes. That business knowledge, codified as an executable workflow, is not something Anthropic sells. It is the one thing that stays inside the company when the technical layer becomes a line item that looks identical for everyone.

Related Articles

A $1,500 cap on AI treats the symptom, not the cause

Uber capped AI spend at $1,500 per person and one company burned $500M on Claude in a month. The cap treats the symptom. The cause is agents turned loose with no scope.

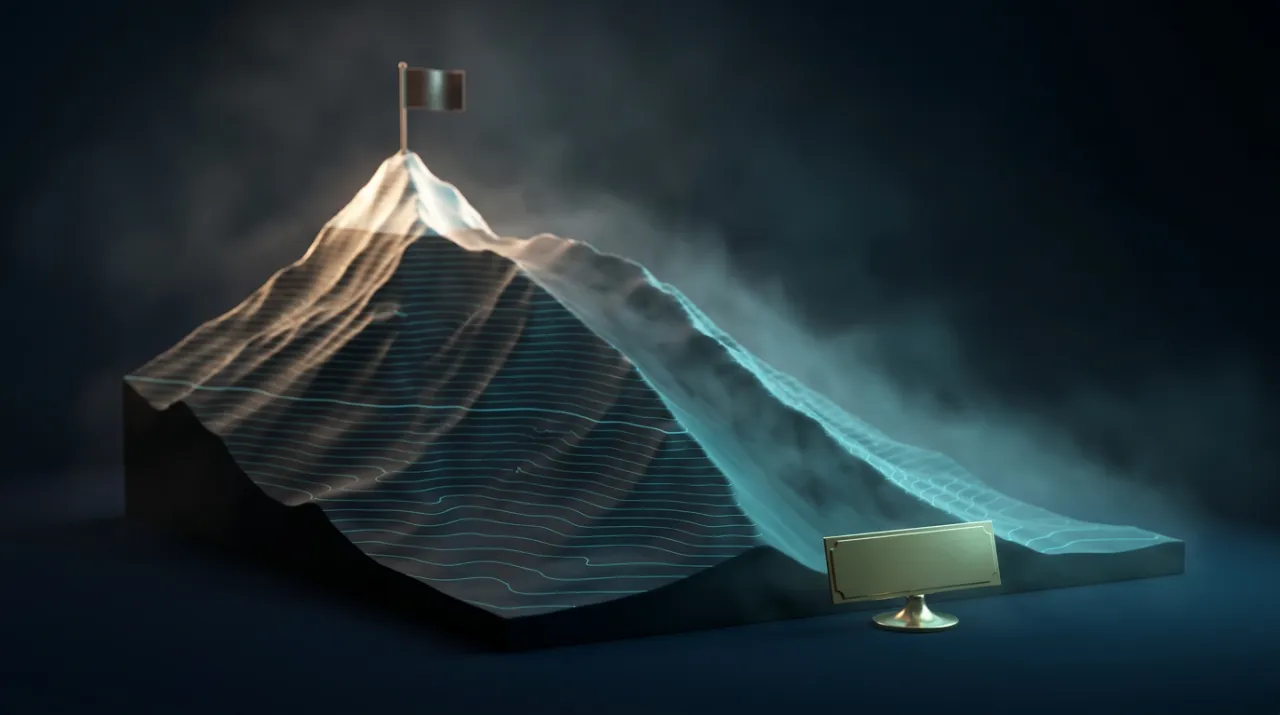

Peak AI confidence, and the downslope nobody owns

Building AI has never been cheaper, so the bet is to build. But 95% of pilots move no P&L, and in most companies nobody owns the downslope of the curve.