Google's AI Ecosystem: What Works for B2B, What Doesn't

Ricardo Argüello — March 1, 2026

CEO & Founder

General summary

Google has over 25 AI-related products with overlapping capabilities and a track record of renaming and discontinuing services with little notice. This is an honest map — not a sales guide — that identifies where Gemini and Vertex AI make sense for B2B and where the lock-in traps are.

- Google's AI ecosystem has 25+ products with overlapping capabilities — the first challenge is knowing where to start without losing three months on evaluation

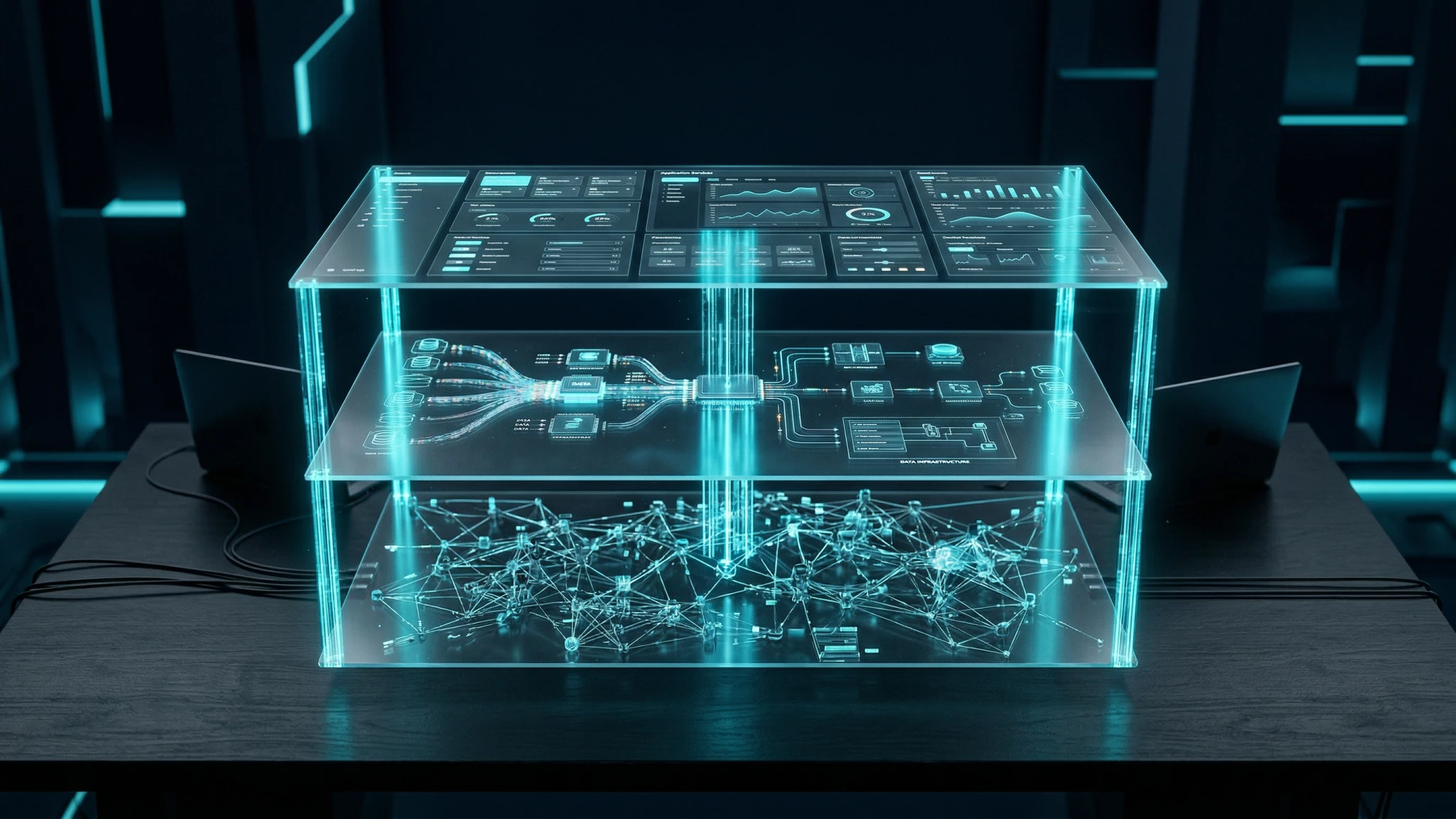

- The ecosystem divides into three layers: foundation models (Gemini), platform services (Vertex AI, BigQuery ML, Document AI), and pre-built vertical solutions (Contact Center AI, Healthcare AI, Retail AI)

- Gemini excels at multimodal processing and long context windows; Claude leads in reasoning; GPT-4 has the broadest plugin ecosystem

- Google's history of renaming and killing products (PaLM to Gemini, Bard to Gemini, AutoML Tables to Vertex AI) is a documented risk that requires abstraction layers

- If your data isn't already on GCP, the cost of moving it may outweigh the benefits of Vertex AI — the direct Gemini API avoids that lock-in

Imagine walking into a hardware store that has 25 different drills, many of which do similar things, and the store has a habit of renaming products and pulling some off the shelves without warning. That's Google's AI product line. Some of those drills are excellent for specific jobs, but you need a map to figure out which ones to buy and which ones to skip — and a plan for what to do if they discontinue the one you picked.


Google Has Too Many AI Tools — and That’s a Problem

Open the Google Cloud console and count: Vertex AI, Gemini API, BigQuery ML, Document AI, AutoML, Dialogflow, Contact Center AI, Healthcare AI, Retail AI, Vision AI, Natural Language AI, Translation AI, Speech-to-Text, Text-to-Speech…

That’s over 25 AI-related products, many with overlapping capabilities.

For a B2B company evaluating Google AI adoption, the first question isn’t “which one do I use?” but “where do I start without losing three months on evaluation?”

The problem gets worse because Google has a track record of renaming, merging, and discontinuing AI products at a pace that erodes trust. PaLM became Gemini. Bard became Gemini. AutoML Tables is now part of Vertex AI. Each rebrand means outdated documentation, forced migrations, and risk for companies that already implemented the previous version.

This article is an honest map. Not a Google sales guide — a practical assessment of what works, what doesn’t, and where the traps are.

Three Layers of Google’s AI Ecosystem

To make sense of the ecosystem without getting lost, it helps to divide it into three layers by level of control.

Layer 1: Foundation Models — Gemini

Gemini is Google’s model family, available in three tiers:

- Gemini Flash: the fast, economical model. Ideal for classification, data extraction, and high-volume tasks where latency matters more than sophistication

- Gemini Pro: the general-purpose model. Good for content generation, document analysis, and data reasoning with context windows up to 2M tokens

- Gemini Ultra: the most capable model. For complex reasoning tasks, advanced multimodal analysis, and problems requiring multi-step inference

Gemini’s real strength against competition is its native multimodal capability. It isn’t a text model with vision bolted on as a patch — it can process text, images, audio, and video within the same API call. For companies working with scanned documents, security video, or call center audio, this simplifies architecture considerably.
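To make "the same API call" concrete, here is a minimal sketch of a request body that carries a text prompt and an image together, following the public `generateContent` REST format of the Gemini API. The helper name `build_multimodal_request` and the sample bytes are illustrative assumptions, and the sketch deliberately stops before sending anything: endpoint, model name, and API key are left to your own setup.

```python
import base64
import json


def build_multimodal_request(prompt: str, media: bytes, mime_type: str) -> dict:
    """Assemble a generateContent-style body with one text part and one media part.

    Hypothetical helper: it only builds the JSON body and never calls the API.
    """
    encoded = base64.b64encode(media).decode("ascii")
    return {
        "contents": [
            {
                "parts": [
                    {"text": prompt},
                    {"inline_data": {"mime_type": mime_type, "data": encoded}},
                ]
            }
        ]
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real scanned invoice image.
    body = build_multimodal_request(
        "Extract the invoice total.", b"placeholder-image-bytes", "image/png"
    )
    print(json.dumps(body, indent=2))
```

The same `parts` list can hold audio or video parts with a different `mime_type`, which is what removes the need for a separate vision or speech service in front of the model.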

Where Gemini falls short: in our experience, complex instruction following and precision on tasks requiring step-by-step reasoning still don’t match Claude in several enterprise scenarios. And Google’s history of abrupt changes (deprecating models with little notice) creates legitimate uncertainty about long-term stability.

Layer 2: Platform Services — Vertex AI and Company

This is where Google adds value on top of the model:

- Vertex AI: the orchestration platform. Deploy models, create data pipelines, fine-tune, and monitor inferences. Google’s equivalent to Amazon SageMaker or Azure ML

- BigQuery ML: machine learning directly from SQL. If your data already lives in BigQuery, you can train classification, regression, and clustering models without moving data or switching tools

- Document AI: structured document extraction. Invoices, contracts, forms — converted into structured data with pre-trained or custom models

Layer 3: Pre-Built Solutions

Vertical products that Google packages for specific industries:

- Contact Center AI: call center automation with conversational agents

- Healthcare AI: medical image and clinical data analysis

- Retail AI: product personalization and demand forecasting

The problem with this layer: maximum lock-in, minimum portability. If you adopt Contact Center AI and later want to migrate, you’re essentially starting over. The data, flows, and integrations are proprietary.

Quick Comparison Across Layers

| Criterion | Layer 1: Models (Gemini) | Layer 2: Platform (Vertex AI) | Layer 3: Vertical Solutions |
|---|---|---|---|
| Control | High: choose model and configure | Medium: Google manages infra | Low: black box |
| Lock-in risk | Low if using direct API | Medium: pipelines tied to GCP | High: costly migration |
| Time to production | Weeks | 1-3 months | 2-6 months |
| Typical monthly cost | $500 - $5,000 | $2,000 - $20,000 | $10,000+ |
| Team required | Developers with API experience | ML engineers + DevOps | Certified implementers |

Where Google Actually Wins — and Where It Doesn’t

Where Google wins

BigQuery ML for companies already in BigQuery. If your business data already lives in BigQuery — and for many B2B companies that migrated to GCP in the last 5 years, it does — BigQuery ML removes the friction of moving data to another platform for machine learning. Train models with SQL, results stay where your data is. Simple and effective.
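As a sketch of what "train models with SQL" looks like, the snippet below builds a BigQuery ML `CREATE MODEL` statement for a logistic regression classifier. The dataset, table, and column names (`crm.churn_model`, `crm.customers`, `churned`, and the feature columns) are hypothetical, and the function only produces the SQL text; running it requires the BigQuery console or a client library.

```python
def build_create_model_sql(
    model: str, source_table: str, label: str, features: list[str]
) -> str:
    """Return a BigQuery ML CREATE MODEL statement for a logistic regression.

    All identifiers passed in are illustrative; nothing is sent to BigQuery.
    """
    # The training query selects the feature columns plus the label column.
    columns = ",\n  ".join(features + [label])
    return (
        f"CREATE OR REPLACE MODEL `{model}`\n"
        f"OPTIONS (model_type = 'LOGISTIC_REG', input_label_cols = ['{label}']) AS\n"
        f"SELECT\n  {columns}\n"
        f"FROM `{source_table}`"
    )


if __name__ == "__main__":
    print(
        build_create_model_sql(
            model="crm.churn_model",
            source_table="crm.customers",
            label="churned",
            features=["plan", "monthly_spend", "support_tickets"],
        )
    )
```

Predictions then come from `ML.PREDICT` in another query, so both training and scoring stay inside the warehouse.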

Document AI for structured document extraction. We’ve implemented Document AI on projects with our clients to process invoices, purchase orders, and contracts. Accuracy on semi-structured documents (PDFs with tables, scanned forms) is consistently good, especially with pre-trained models for standard document types.

Gemini Flash for high-volume, low-cost tasks. For email classification, entity extraction, and content categorization at scale, Flash offers the best cost-to-performance ratio on the market. In a project with an e-commerce client, the cost per processed document with Flash was ~40% lower than comparable models from other providers.

Grounding with Google Search. The ability to connect Gemini’s responses with live Google Search results is unique. For applications that need real-time factual information — competitor pricing, industry news, regulatory data — this adds a verification layer that other providers don’t have.

Where Google doesn’t win

Product stability track record. This is the elephant in the room. Google has discontinued, renamed, or merged more AI products in the last three years than any other provider. The Google product graveyard is long and well-documented. For a company planning a 12-24 month investment in integration, this instability is a real risk.

Enterprise support. Compared to the Azure support experience (with dedicated account teams) or even Anthropic (with direct technical support for enterprise customers), Google Cloud support still feels transactional. When you have a production issue at 2 AM, the difference matters.

Complex reasoning and instruction following. For tasks requiring multi-step reasoning, extensive legal document analysis, or precise following of complex instructions, Gemini Pro still has gaps compared to the best competing models. It improves with each version, but the gap exists.

The “Already on GCP” Factor

If your company already runs on Google Cloud Platform, Google’s AI ecosystem has integration advantages that competitors struggle to match. Your data in BigQuery, your buckets in Cloud Storage, and your networks in VPC are reachable from the AI services with little additional configuration.

But if you’re not on GCP, those advantages vanish. And migrating to GCP just for AI tools rarely justifies itself.

Lock-in Risk in Google’s Ecosystem

Lock-in with Google operates on four levels:

Level 1: Data. BigQuery ML requires your data in BigQuery. Document AI batch processing reads documents from Cloud Storage. Vertex AI Pipelines reads GCP datasets. Moving your data to another provider has a real cost in time, transfer, and reprocessing.

Level 2: Pipelines. Vertex AI Pipelines runs definitions written with the open-source Kubeflow Pipelines or TFX SDKs, but the components usually call GCP services (BigQuery, Cloud Storage, Vertex AI training and endpoints). In practice you can’t take a Vertex AI pipeline and run it in AWS SageMaker or Azure ML without rewriting much of it.

Level 3: Trained models. If you fine-tune a Gemini model in Vertex AI, the tuned model stays in Vertex AI. AutoML export is limited to certain model types (for example tabular and Edge models), so much of your training investment is hard to move.

Level 4: Integrations. Every connector, webhook, and trigger you configure within Google’s ecosystem is work that’s lost if you migrate.

How to Mitigate Lock-in

The strategy we recommend to our clients:

- Use the direct Gemini API whenever possible, not Vertex AI, unless you need fine-tuning or complex pipelines. The API is portable — switching from Gemini to another provider only requires changing the endpoint and adapting the format.

- Implement abstraction layers between your application and the AI provider. A thin provider-agnostic interface keeps model calls swappable, and MCP servers do the same for tools and data: they expose them through a standard protocol instead of one vendor's function-calling format

- Keep your data in portable formats. If you use BigQuery, export regular copies in Parquet or CSV. If you train models, document datasets and parameters so you can replicate training on another platform

- Evaluate alternatives continuously. As we covered in our AI vendor selection guide, evaluation isn’t a one-time event — it’s a quarterly process
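The abstraction-layer recommendation can be sketched as a small interface that business logic depends on, with one adapter per provider behind it. Everything here is an assumption for illustration: the `TextModel` interface, the adapter classes, and the `classify_ticket` function are hypothetical names, and the adapters return placeholder strings where real code would call each vendor's API.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """What the application needs from any provider: prompt in, text out."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class GeminiAdapter:
    api_key: str

    def complete(self, prompt: str) -> str:
        # Placeholder: a real adapter would call the Gemini API here.
        return f"[gemini] {prompt}"


@dataclass
class ClaudeAdapter:
    api_key: str

    def complete(self, prompt: str) -> str:
        # Placeholder: a real adapter would call the Anthropic API here.
        return f"[claude] {prompt}"


def classify_ticket(model: TextModel, ticket: str) -> str:
    """Business logic sees only the TextModel interface, never a vendor SDK."""
    return model.complete(f"Classify this support ticket: {ticket}")


if __name__ == "__main__":
    # Swapping providers is a change at the call site, not in the business logic.
    print(classify_ticket(GeminiAdapter(api_key="demo"), "Invoice is wrong"))
    print(classify_ticket(ClaudeAdapter(api_key="demo"), "Invoice is wrong"))
```

With this shape, "changing the endpoint and adapting the format" is confined to one adapter class, which is where the portability of the direct API actually pays off.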

How to Evaluate Whether Google AI Is Right for Your Company

Before investing time in proofs of concept, answer these five questions:

Question 1: Where does your data live today?

If the answer is Google Cloud Platform — BigQuery, Cloud Storage, Firestore — Google’s AI ecosystem is the natural candidate. Native integration saves weeks of engineering work.

If your data is on AWS, Azure, or on-premises, the integration advantages disappear. The Gemini API is still a valid option, but platform services (Vertex AI, BigQuery ML) lose their main edge.

Question 2: What type of processing do you need?

- Multimodal (text + image + audio + video): Google has the advantage. Gemini handles this natively.

- Text-only with complex reasoning: Evaluate Claude and GPT-4 first. Gemini is improving but doesn’t lead this category yet.

- Traditional ML (classification, regression, clustering): BigQuery ML is hard to beat if you’re already in BigQuery.

- Document extraction: Document AI is competitive. But also evaluate alternatives like Amazon Textract or open source solutions.

Question 3: How comfortable is your team with GCP?

Google’s tools require familiarity with its ecosystem. IAM policies, service accounts, VPC networking, billing alerts — if your team already masters GCP, the learning curve is minimal. If not, add 4-8 weeks of onboarding before seeing results.

Question 4: How much portability do you need?

If your company operates in industries where switching AI providers may be necessary (due to regulation, costs, or strategy), prioritize Layer 1 (direct Gemini API) over Layers 2 and 3. Every layer you add reduces your ability to migrate.

Question 5: What’s your timeline?

- Need results in 2-4 weeks: Direct Gemini API with well-designed prompts

- Can invest 1-3 months: Vertex AI with pipelines and monitoring

- 6+ month project: Pre-built vertical solutions (only if the industry and ROI justify it)

Reference Architecture: Google AI in a Multi-Vendor Strategy

The strategy that has worked best for our B2B clients isn’t choosing a single provider — it’s using each provider where it performs best.

Assignment by Use Case

| Use case | Recommended provider | Reason |
|---|---|---|
| Multimodal processing (image + text + video) | Gemini Pro/Flash | Native multimodality, competitive cost |
| Complex reasoning, legal analysis, writing | Claude | Better instruction following, more precise reasoning |
| Consumer-facing chat with plugins | GPT-4 | Broadest plugin ecosystem, brand recognition |
| ML on BigQuery data | BigQuery ML | Zero friction if data is already there |
| Structured document extraction | Document AI or Claude | Depends on volume and document type |
| Responses with real-time data | Gemini with grounding | Google Search grounding is unique |
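One way to keep this assignment out of application code is to hold it as configuration. The sketch below encodes the table as a lookup; the `UseCase` enum and `provider_for` function are hypothetical names, and the provider strings are copied from the table rather than tied to any SDK.

```python
from enum import Enum


class UseCase(Enum):
    MULTIMODAL = "multimodal"
    COMPLEX_REASONING = "complex_reasoning"
    CONSUMER_CHAT = "consumer_chat"
    ML_ON_BIGQUERY = "ml_on_bigquery"
    DOCUMENT_EXTRACTION = "document_extraction"
    REALTIME_DATA = "realtime_data"


# Mirrors the assignment table; revisit it at each quarterly evaluation.
ROUTING = {
    UseCase.MULTIMODAL: "Gemini Pro/Flash",
    UseCase.COMPLEX_REASONING: "Claude",
    UseCase.CONSUMER_CHAT: "GPT-4",
    UseCase.ML_ON_BIGQUERY: "BigQuery ML",
    UseCase.DOCUMENT_EXTRACTION: "Document AI or Claude",
    UseCase.REALTIME_DATA: "Gemini with grounding",
}


def provider_for(use_case: UseCase) -> str:
    """Look up the recommended provider for a use case."""
    return ROUTING[use_case]


if __name__ == "__main__":
    for case in UseCase:
        print(f"{case.value}: {provider_for(case)}")
```

When an evaluation changes the recommendation for a use case, the change is one line in `ROUTING` rather than a search through the codebase.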

MCP Servers as an Abstraction Layer

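The piece that makes this multi-vendor strategy viable is an abstraction layer between your application and the models. MCP servers cover the tool side: they expose your business data and actions through a standard protocol that any MCP-compatible model client can use, so integrations are not coupled to one provider. Pair them with a thin provider-agnostic interface for the model calls themselves.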

In practice, this means you can:

- Switch from Gemini to Claude for a use case without modifying your application

- Run the same prompt against multiple models to compare results

- Implement automatic fallbacks if a provider goes down
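The fallback and side-by-side comparison in this list can be sketched with plain callables standing in for providers. This is a minimal illustration under stated assumptions: `complete_with_fallback`, `compare`, and the two stub providers are hypothetical, and the stubs simulate an outage and a response instead of calling real APIs.

```python
from typing import Callable

# A provider is modeled as any function that takes a prompt and returns text.
Provider = Callable[[str], str]


def complete_with_fallback(
    prompt: str, providers: list[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in order; return (name, response) from the first that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def compare(prompt: str, providers: list[tuple[str, Provider]]) -> dict[str, str]:
    """Run the same prompt against every provider and collect the responses."""
    return {name: call(prompt) for name, call in providers}


def flaky_gemini(prompt: str) -> str:
    # Stub that simulates a provider outage.
    raise TimeoutError("simulated outage")


def stub_claude(prompt: str) -> str:
    # Stub that simulates a successful response.
    return f"claude: {prompt}"


if __name__ == "__main__":
    chain = [("gemini", flaky_gemini), ("claude", stub_claude)]
    print(complete_with_fallback("Summarize this contract.", chain))
```

A production version would add timeouts, retries, and logging of which provider answered, since silent fallbacks can hide quality differences between models.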

Cost Comparison for a Typical B2B Workload

For a use case processing ~100,000 documents per month, assuming ~500 input tokens and ~500 output tokens per document (about 50M tokens in each direction per month):

| Model | Input cost (per 1M tokens) | Output cost (per 1M tokens) | Estimated monthly cost |
|---|---|---|---|
| Gemini Flash | $0.075 | $0.30 | ~$19 |
| Gemini Pro | $1.25 | $5.00 | ~$313 |
| Claude Haiku | $0.25 | $1.25 | ~$75 |
| Claude Sonnet | $3.00 | $15.00 | ~$900 |
| GPT-4o-mini | $0.15 | $0.60 | ~$38 |

Note: approximate prices as of March 2026. Prices vary by volume, region, and enterprise agreement type.
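The monthly estimates can be reproduced with a few lines of arithmetic. The sketch below assumes 500 input tokens and 500 output tokens per document, which is the split that matches the table's totals; the per-token prices are copied from the table, and the function and constant names are illustrative.

```python
DOCS_PER_MONTH = 100_000
INPUT_TOKENS_PER_DOC = 500   # assumption: matches the table's totals
OUTPUT_TOKENS_PER_DOC = 500  # assumption: matches the table's totals

# USD per 1M tokens as (input, output), copied from the table.
PRICES = {
    "Gemini Flash": (0.075, 0.30),
    "Gemini Pro": (1.25, 5.00),
    "Claude Haiku": (0.25, 1.25),
    "Claude Sonnet": (3.00, 15.00),
    "GPT-4o-mini": (0.15, 0.60),
}


def monthly_cost(
    input_price: float,
    output_price: float,
    docs: int = DOCS_PER_MONTH,
    input_tokens: int = INPUT_TOKENS_PER_DOC,
    output_tokens: int = OUTPUT_TOKENS_PER_DOC,
) -> float:
    """Monthly cost in USD given per-1M-token prices and per-document token counts."""
    input_millions = docs * input_tokens / 1_000_000
    output_millions = docs * output_tokens / 1_000_000
    return input_millions * input_price + output_millions * output_price


if __name__ == "__main__":
    for model, (input_price, output_price) in PRICES.items():
        print(f"{model}: ${monthly_cost(input_price, output_price):,.2f}")
```

Changing the token counts shows how sensitive the comparison is to output length: for extraction tasks that return only a few fields, output tokens drop sharply and the gap between models narrows in absolute terms.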

The point isn’t that one provider is “better” — it’s that the right choice depends on the use case and volume. Gemini Flash for massive classification tasks. Claude Sonnet for analysis requiring precise reasoning. The multi-vendor architecture optimizes costs without sacrificing quality.

What We Tell Our Clients About Google AI

Google’s AI ecosystem is a messy drawer with excellent tools inside. BigQuery ML is hard to beat for tabular data on GCP. Document AI handles document extraction well. Gemini Flash has the best cost-to-performance ratio for high-volume tasks. And Gemini’s native multimodal capability is genuinely differentiating.

But we also tell them what Google won’t: the product stability track record creates real risk, platform-layer lock-in is significant, and enterprise support still isn’t at the level of some competitors.

The recommendation that has delivered the best results: use Google AI where it has clear advantages (data on GCP, multimodal, high volume), but don’t bet everything on a single provider. A well-designed API strategy with abstraction layers gives you the best of each ecosystem without getting trapped in any one.

Want to evaluate how Google AI fits into your current stack? Reach out for a multi-vendor architecture assessment — we analyze your infrastructure, your use cases, and design the combination that optimizes results and reduces risk.

Frequently Asked Questions

Should I use Vertex AI or the direct Gemini API?

It depends on your current infrastructure. If you already run on Google Cloud and need data pipelines, fine-tuning, and integrated monitoring, Vertex AI makes sense. If you only need text generation or multimodal analysis capabilities, the direct Gemini API is simpler, cheaper, and avoids GCP lock-in.

Can I use Google AI if my infrastructure is on AWS or Azure?

Yes, but with caveats. The Gemini API works independently of your cloud infrastructure. However, services like BigQuery ML, Document AI, and Vertex AI Pipelines assume your data is already in GCP. If you use AWS or Azure, the cost of moving data and the complexity of multi-cloud operations may outweigh the benefits.

How does Gemini compare to Claude and GPT-4?

Each model has distinct strengths. Gemini excels at native multimodal processing and long context windows (up to 2M tokens). Claude leads in reasoning, complex instruction following, and data privacy. GPT-4 has the broadest plugin ecosystem. The best strategy for most B2B companies is multi-vendor: use each model where it performs best.

What if Google discontinues or renames a product I depend on?

This is a documented risk: Google has discontinued or renamed multiple AI products in the last three years (PaLM → Gemini, Bard → Gemini, AutoML Tables → Vertex AI). To mitigate it, isolate integrations behind abstraction layers, avoid dependencies on exclusive features without alternatives, and maintain a contingency plan with at least one evaluated alternative provider.
