Your AI Agent Is Free. Deploying It to Production Isn't.

Ricardo Argüello — March 13, 2026

CEO & Founder

General summary

Every week a client sends us a link to an open-source AI agent framework. The code is free and it works. The problem starts when you want to deploy it to production with security, monitoring, and someone who picks up the phone at 2 AM. It's the same pattern as Heroku with Rails or Vercel with Next.js: the tool is free, but the infrastructure isn't.

- Open-source AI agent frameworks have a deployment barrier that locks out the B2B teams who would benefit most

- The Heroku pattern repeats: every open-source tool requiring server configuration eventually gets a managed layer on top

- Managed platforms solve the symptom (deployment complexity) but not the root cause (lack of infrastructure expertise)

- The real question isn't self-host vs. managed — it's who controls your AI infrastructure and what happens when something breaks

Imagine someone gives you a free sports car. Keys and all. But you need to build your own garage, your own gas station, and hire a mechanic who's available when it breaks down at midnight. The car cost you nothing — everything else did. That's exactly what happens with open-source AI agent frameworks: the code is free, but making it run in your company requires infrastructure, security, and support that someone has to build.

AI-generated summary

Every week we get the same message: a link to an open-source AI agent framework, followed by “it’s free — why would we pay for something that already exists?”

The framework works. Runs perfectly on localhost. The README takes you from zero to docker-compose up in 15 minutes. Tests pass.

Then someone tries to connect it to a corporate Active Directory, manage API keys for five different AI providers, and make sure customer data doesn’t leave the region. That’s where “free” ends.

It’s the same pattern the software industry has repeated for 20 years — and now it’s AI agents’ turn.

A Pattern You Already Know (If You Remember Heroku)

In 2007, Ruby on Rails was free and it transformed web development. But deploying it to production required knowing how to configure Linux servers, load balancers, and clustered databases. Heroku showed up with git push heroku main and charged to remove that friction.

The same cycle repeated: Next.js → Vercel, static sites → Netlify, Terraform → HCP after HashiCorp’s license change. The pattern has three phases: an open-source tool gains traction, enterprise teams discover that the basic installation isn’t enough for production, and someone builds a managed layer on top that charges to handle the complexity.

Now it’s happening with AI agent frameworks. Aakash Gupta identified it this week: OpenClaw, an open-source agent framework, requires Node.js 18+, Docker, multi-provider API key management, and server hardening. FlashClaw appeared offering one-click deploys.

It’s not just OpenClaw. CrewAI, AutoGen, LangGraph — they all have the same gap between “works on my laptop” and “works in production with my company’s security policies.” CrowdStrike has documented misconfigured agent instances becoming attack surfaces — the canary in the coal mine for this trend.

If you’re still evaluating WHAT agents can do for your operations, start with our enterprise agents playbook. This article assumes you’ve already decided to use them and asks: how do you deploy them?

What the README Won’t Tell You About Production

Every agent framework ships with a README that takes you from zero to “it works” in 15 minutes. What it doesn’t cover is what happens after those 15 minutes:

| What you download | What production requires |
|---|---|
| docker-compose.yml | Container orchestration with health checks and restart policies |
| .env.example | Secrets management with automatic rotation and audit trails |
| Localhost testing | TLS termination, configured firewalls, DDoS protection |
| Single-user mode | Multi-tenant isolation with access control |

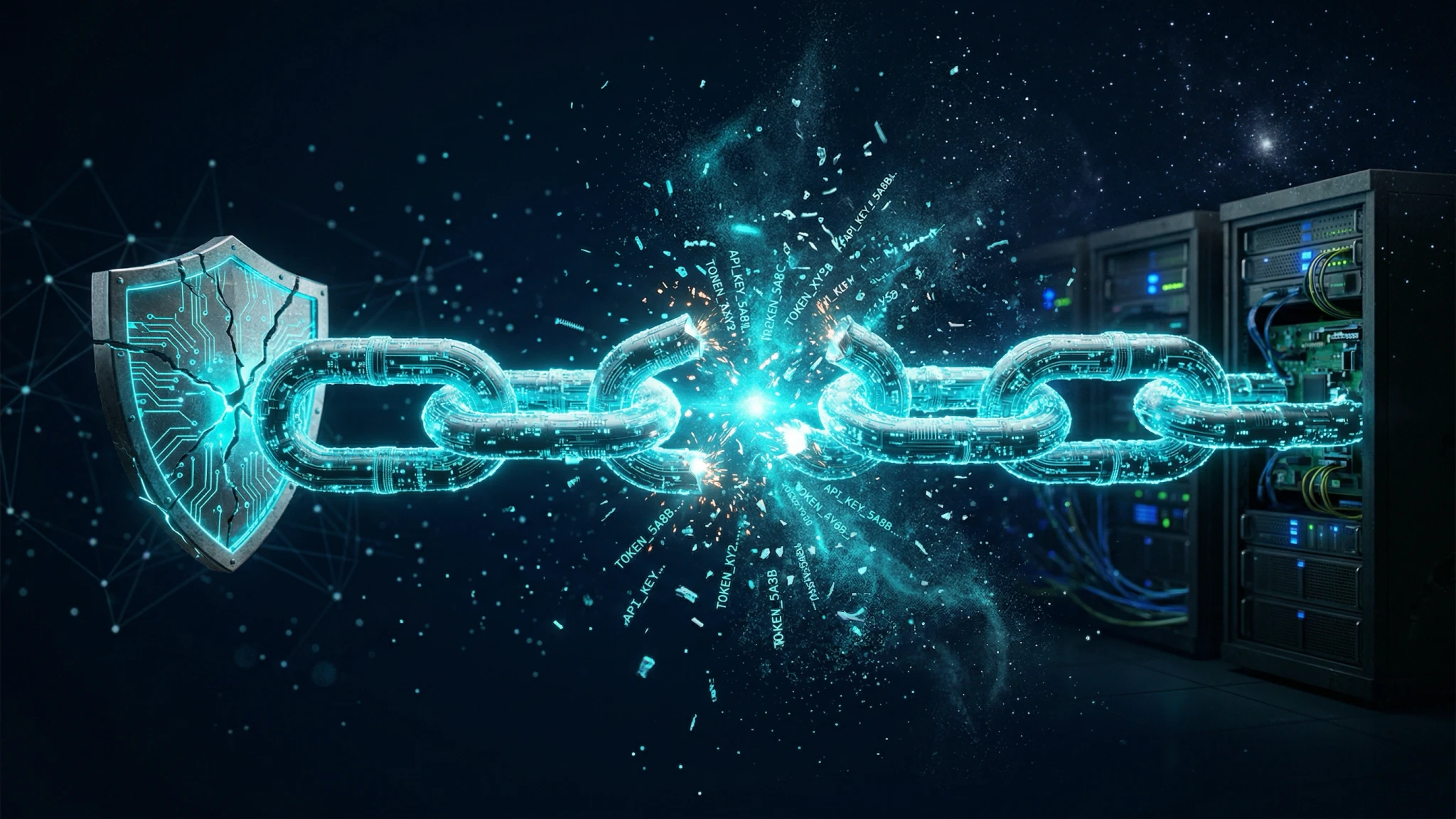

API Keys Are the New Attack Perimeter

An agent framework connected to 5 or 10 AI providers means 5 or 10 sets of API keys, each with different rotation policies, rate limits, and billing models. OpenAI rotates differently from Anthropic, which rotates differently from Cohere.

In our experience, ~80% of the teams we evaluate store these keys in .env files without encryption or rotation. A single compromised file exposes access to every provider at once — made worse by the fact that AI keys typically have access to models processing sensitive data.
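To make the rotation gap concrete, here is a minimal sketch of a rotation-age check over a key inventory. The `ProviderKey` type, the provider names, and the rotation windows are illustrative assumptions, not any provider's actual policy. Only key metadata is tracked here; the secret values themselves would stay in whatever vault you use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ProviderKey:
    """Metadata about one API key (hypothetical type; no secret value stored)."""
    provider: str
    created_at: datetime
    max_age: timedelta  # assumed rotation window for this provider


def keys_due_for_rotation(keys, now=None):
    """Return the providers whose key is older than its rotation window."""
    now = now or datetime.now(timezone.utc)
    return [k.provider for k in keys if now - k.created_at > k.max_age]


if __name__ == "__main__":
    now = datetime(2026, 3, 13, tzinfo=timezone.utc)
    inventory = [
        # Placeholder provider names and windows, for illustration only.
        ProviderKey("provider-a", now - timedelta(days=120), timedelta(days=90)),
        ProviderKey("provider-b", now - timedelta(days=10), timedelta(days=30)),
    ]
    print(keys_due_for_rotation(inventory, now))
```

A check like this could run on a schedule and feed an alert, which is the part a plain .env file cannot give you: it has no notion of key age or ownership.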

The OWASP Top 10 for LLM Applications already lists inadequate credential management as a priority attack vector.

The Agent That Works on Your Laptop Doesn’t Work at 2 AM

On your laptop, if an AI provider goes down, you restart the process. In production at 2 AM, you need monitoring to detect the failure, alerts to notify the right team, fallback logic to switch to an alternative provider, and enough logs to diagnose what happened the next morning.

None of that ships with the framework. Monitoring and alerting, auto-restart policies, graceful degradation when a provider goes down — you build all of it yourself. Or you don’t, and you find out when something fails at the worst possible time.
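As a rough illustration of the fallback piece, the sketch below tries providers in order and logs each failure so there is something to read the next morning. The function name `call_with_fallback` and the two stand-in providers are hypothetical; a real deployment would wrap actual client libraries and catch their specific exception types instead of a bare `Exception`.

```python
import logging
from typing import Callable, Sequence

logger = logging.getLogger("agent.fallback")


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has failed."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> str:
    """Try each (name, callable) pair in order; return the first success."""
    errors = []
    for name, call in providers:
        try:
            return call(prompt)
        except Exception as exc:  # real code: catch the client's own errors
            logger.warning("provider %s failed: %s", name, exc)
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


if __name__ == "__main__":
    logging.basicConfig(level=logging.WARNING)

    def primary(prompt: str) -> str:  # stand-in for a provider that is down
        raise TimeoutError("upstream timeout")

    def backup(prompt: str) -> str:  # stand-in for a healthy provider
        return f"echo: {prompt}"

    print(call_with_fallback("ping", [("primary", primary), ("backup", backup)]))
```

The logged warnings are what turn a silent 2 AM failover into something you can diagnose later. Detection and paging still need a separate monitoring layer.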

Three Paths, Three Trade-offs

Three options, each with real trade-offs — none of them universally right.

Self-hosting: Full Control, Full Cost

It makes sense when your team includes dedicated platform engineers, when regulations require you to keep everything on-premise, or when you need deep customization of the framework.

The real cost isn’t the servers — it’s the people. The Puppet State of DevOps Report consistently documents that engineering teams spend ~35% of their time on infrastructure tasks instead of product development. Adding an agent framework to that workload increases it without adding capacity to the team.

Being honest: out of the last 50 clients who’ve evaluated AI agents with us, maybe 3 genuinely needed to self-host. The other 47 thought they did because they didn’t know the alternatives.

Managed Platforms: Speed With Fine Print

FlashClaw, LangSmith, and various agent-as-a-service platforms solve the deployment friction immediately. You upload your configuration, connect your providers, and in 20 minutes you have an agent running. The time-to-value is real.

The fine print:

Data residency. Where do your prompts and agent responses go? If your company handles client data subject to local privacy regulations, this question isn’t optional.

Vendor lock-in. The proprietary orchestration layers that make deployment easy also make migration hard. Your agent configuration lives in their format, on their infrastructure, under their terms. The day you want to switch platforms, you discover your agent logic is tied to their abstractions.

Pricing. One client started at $180/month during their pilot. Six months later, processing real volumes, the invoice was $4,200. The model works until it scales.

Technical Partner: Someone Who Knows Your Infrastructure

The third option most companies don’t consider: a technical partner who deploys the open-source tool on YOUR infrastructure, configured to YOUR security policies, and is available when something breaks.

Last quarter we deployed an open-source agent framework for a logistics client in Central America. Installing the framework itself took 2 hours. The security configuration, monitoring, and integration with their existing Azure AD took 3 weeks. That ratio — hours of installation versus weeks of hardening — is consistent across every project we’ve done.

If you’re also evaluating which AI providers to connect to your agents, our vendor selection framework covers the data governance side.

The Question Nobody Asks

The usual debate centers on “self-host vs. managed platform.” But that’s arguing about the symptom, not the cause.

The real question is: who controls your AI infrastructure, and what happens when something breaks at 2 AM?

Managed platforms handle the deployment. But when something breaks at 2 AM — and it will — whoever picks up the phone needs to know your infrastructure, not just their dashboard.

Before choosing a path, answer these four questions:

- Can your team configure container orchestration with health checks and automatic restarts?

- Do you have a secrets management system with rotation and audit trails, or do you use .env files?

- Is there monitoring with alerts configured for the services running your agents?

- Do you have an incident response runbook for when an AI provider goes down or an agent produces incorrect results?

If your team answers yes to all four, you can probably self-host.

If you can’t answer yes to at least three of the four, you need help: either a managed platform or a technical partner. The difference between the two is that a managed platform owns your stack, while a technical partner makes sure you own it.
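The four questions above can be written down as a small checklist function. This is only a sketch of the rule of thumb: the labels are ours, and the "borderline" outcome for exactly three yes answers is an assumption, since the text only covers the all-four and fewer-than-three cases.

```python
QUESTIONS = (
    "container orchestration with health checks and automatic restarts",
    "secrets management with rotation and audit trails",
    "monitoring with alerts for the services running agents",
    "incident response runbook for provider outages or incorrect results",
)


def recommend_path(answers):
    """Map four yes/no answers to a rough recommendation."""
    if len(answers) != len(QUESTIONS):
        raise ValueError(f"expected {len(QUESTIONS)} answers, got {len(answers)}")
    yes_count = sum(bool(a) for a in answers)
    if yes_count == len(QUESTIONS):
        return "self-host"
    if yes_count < 3:
        return "get help (managed platform or technical partner)"
    # Exactly three: not covered by the rule of thumb; assumed borderline.
    return "borderline: close the remaining gap first"


if __name__ == "__main__":
    print(recommend_path([True, False, True, False]))
```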

What We Do When a Client Brings Us an Open-Source Agent Framework

Most clients who reach this point ask the same thing: “what happens if I send you the link?” Here’s the actual process:

Security audit of the deployment configuration. We review the Dockerfile, docker-compose.yml, and any infrastructure scripts — checking whether containers run as root, whether ports are unnecessarily exposed, and whether base images are current.
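Two of those checks can be sketched as a small script over an already-parsed docker-compose.yml. This is a heuristic illustration, not our audit tooling: `audit_compose` is a hypothetical helper, it assumes the file has been loaded into a plain dict by a YAML parser of your choice, and a missing `user` key only suggests root because the image's own Dockerfile may set a different user.

```python
def audit_compose(compose: dict) -> list[str]:
    """Flag services that may run as root or publish ports on all interfaces."""
    findings = []
    for name, svc in compose.get("services", {}).items():
        # "user" may be "uid", "uid:gid", or a name; inspect the user part only.
        user = str(svc.get("user", "")).split(":")[0]
        if user in ("", "0", "root"):
            findings.append(f"{name}: may run as root (no non-root 'user' set)")

        for port in svc.get("ports", []):
            if isinstance(port, dict):  # long syntax
                host_ip = port.get("host_ip", "")
                published = port.get("published")
            else:  # short syntax: "[host_ip:]host_port:container_port"
                parts = str(port).split(":")
                host_ip = parts[0] if len(parts) == 3 else ""
                # A bare container port gets an ephemeral host port;
                # ignored here for brevity. IPv6 host IPs are not handled.
                published = parts[-2] if len(parts) >= 2 else None
            if published is not None and host_ip not in ("127.0.0.1", "localhost"):
                findings.append(f"{name}: port {published} published on all interfaces")
    return findings


if __name__ == "__main__":
    example = {
        "services": {
            "agent": {"image": "example/agent", "ports": ["8080:8080"]},
            "db": {"user": "1000:1000", "ports": ["127.0.0.1:5432:5432"]},
        }
    }
    for finding in audit_compose(example):
        print(finding)
```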

API key management design. We replace the .env.example with a real system: a vault for secrets with scheduled rotation, scoped to least-privilege per provider.

Monitoring, alerting, and incident runbooks. We connect the framework to the client’s existing observability infrastructure — agent health metrics, response latency, error rate by provider, accumulated daily costs. Then we document what to do when each component fails: provider down, agent stuck in a loop, costs spiking from a misconfigured prompt.
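As a sketch of what "error rate by provider" and "accumulated daily costs" look like in code, the example below aggregates call records and raises simple threshold alerts. The `CallRecord` shape, the 5% error threshold, and the $50 daily budget are placeholder assumptions; in practice these numbers come from the client's existing observability stack and budgets.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallRecord:
    """One agent-to-provider call (hypothetical record shape)."""
    provider: str
    ok: bool
    cost_usd: float


def summarize(records):
    """Aggregate calls, errors, cost, and error rate per provider."""
    totals = defaultdict(lambda: {"calls": 0, "errors": 0, "cost_usd": 0.0})
    for r in records:
        t = totals[r.provider]
        t["calls"] += 1
        t["errors"] += 0 if r.ok else 1
        t["cost_usd"] += r.cost_usd
    return {p: {**t, "error_rate": t["errors"] / t["calls"]} for p, t in totals.items()}


def alerts(summary, max_error_rate=0.05, daily_budget_usd=50.0):
    """Return alert messages for noisy providers and budget overruns."""
    messages = []
    for provider, s in sorted(summary.items()):
        if s["error_rate"] > max_error_rate:
            messages.append(
                f"{provider}: error rate {s['error_rate']:.0%} exceeds {max_error_rate:.0%}"
            )
    total_cost = sum(s["cost_usd"] for s in summary.values())
    if total_cost > daily_budget_usd:
        messages.append(f"daily cost ${total_cost:.2f} exceeds budget ${daily_budget_usd:.2f}")
    return messages


if __name__ == "__main__":
    day = [
        CallRecord("provider-a", True, 0.01),
        CallRecord("provider-a", False, 0.0),
        CallRecord("provider-b", True, 0.02),
    ]
    for message in alerts(summarize(day)):
        print(message)
```

Each alert message should map to an entry in the runbook, so whoever is on call knows the next step and not just the symptom.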

Handoff to the client team. We transfer everything — code, configuration, knowledge. The client owns their infrastructure. We stay available for support, but ownership is theirs.

We cover evaluating the CODE QUALITY of open-source tools in a separate article. This is the other side: evaluating DEPLOYMENT readiness.

If you currently have a docker-compose.yml from an AI agent framework open in a tab and you’re wondering how to get it to production with your company’s security policies, that’s exactly the conversation we have every week. Send us the repo link — we’ll tell you what deployment looks like for your specific environment.

Frequently Asked Questions

The Heroku pattern describes a recurring cycle in technology: an open-source tool gains popularity but requires server expertise to deploy, and eventually a managed platform appears that charges to remove that friction. It happened with Rails and Heroku, Next.js and Vercel, and now it's happening with AI agent frameworks. The code is free; the infrastructure to run it in production is not.

It can be, but it requires significant work beyond installation. You need secrets management with automatic rotation, isolated containers, monitoring with alerting, configured firewalls, and an incident response plan. If your team lacks DevOps and container security experience, self-hosting multiplies the risk instead of reducing it.

A managed platform hosts your agent on their infrastructure: fast deployment, but your data passes through their servers and you depend on their pricing and policies. A technical partner deploys the agent on your infrastructure, configured to your security policies, and hands you the controls. The key difference: with a managed platform, they own the stack. With a technical partner, you own it.

The framework itself is free, but production deployment typically costs between $5,000 and $25,000 USD depending on complexity. That includes container security, API key management, monitoring, and integration work with existing systems. In our experience, installing the framework takes hours; the security hardening and integration take weeks.
