LiteLLM Attack: Your AI Trust Chain Just Broke

Ricardo Argüello — March 25, 2026

CEO & Founder

General summary

On March 24, 2026, LiteLLM versions 1.82.7 and 1.82.8 on PyPI were poisoned with malware that auto-executed whenever Python started. The attackers (TeamPCP) first compromised Trivy, a security scanner, then used stolen credentials to hijack LiteLLM — the AI API key proxy with ~97 million monthly downloads. The malware harvested SSH keys, cloud credentials, Kubernetes secrets, and .env files. It was only caught because the payload was so inefficient it crashed a developer's machine.

- TeamPCP first compromised Trivy (Aqua Security's vulnerability scanner) and used those credentials to poison LiteLLM on PyPI — the protection tool became the attack vector

- The malware used a .pth file that auto-executed every time Python started, without requiring any LiteLLM import

- The payload harvested SSH keys, AWS/GCP/Azure credentials, Kubernetes configs, crypto wallets, and .env files

- TeamPCP crossed 5 ecosystems (GitHub Actions, Docker Hub, npm, OpenVSX, PyPI) in just 5 days, using stolen credentials from each to access the next

- It was caught only because a developer using an MCP plugin in Cursor noticed their machine running out of RAM — no scanner detected it

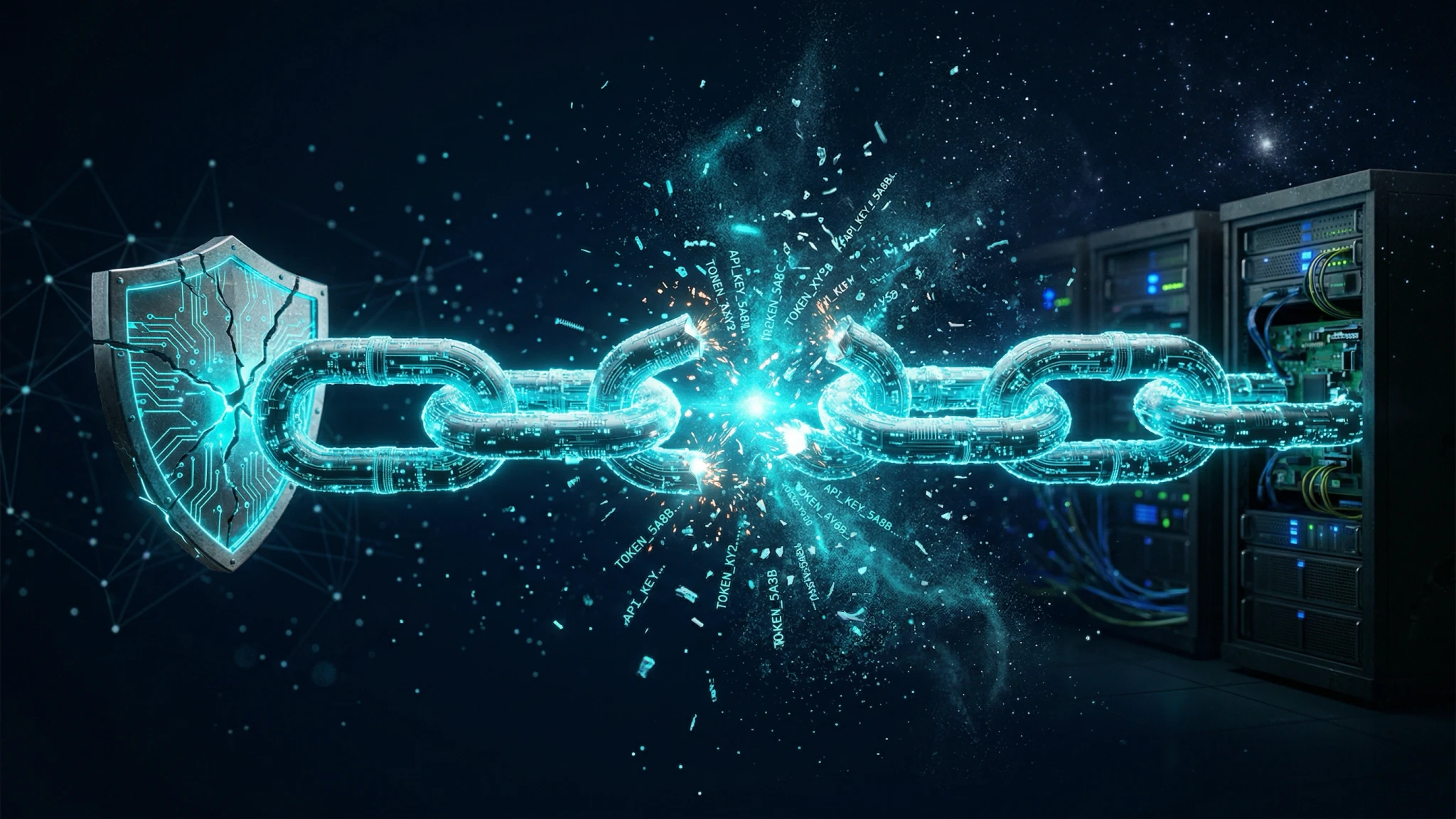

Think of a digital keyring that holds all your AI service keys: OpenAI, Anthropic, Google, Amazon. One day, someone swaps it with an identical keyring that works exactly the same — but also sends a copy of every key to a stranger. And to swap your keyring, they first broke into the alarm system that was supposed to protect it. That's exactly what happened with LiteLLM.

AI-generated summary

On March 24, 2026, someone poisoned LiteLLM on PyPI.

If you haven’t used LiteLLM, it’s the most popular Python package for managing API keys across multiple AI providers. A single proxy that sits between your application and OpenAI, Anthropic, Google, Amazon — all at once. It has over 40,000 stars on GitHub and ~97 million monthly downloads. According to Wiz, it’s present in 36% of cloud environments.

Versions 1.82.7 and 1.82.8 included a .pth file that auto-executed every time Python started on that machine. You didn’t need to import LiteLLM. You didn’t need to call any function. If one of those versions was installed, every Python process in that environment ran the malware at startup.
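The mechanism is standard Python behavior: at startup, the `site` module reads each `.pth` file in site-packages and executes any line that begins with `import`. Here is a minimal sketch for listing those lines so a person can review them. The function names are ours, for illustration, and it assumes the directories reported by `site.getsitepackages()` are the ones your interpreter actually uses. Legitimate packages rely on this feature too, so a hit is a prompt for review, not proof of compromise.

```python
import site
from pathlib import Path


def find_executable_pth_lines(directory):
    """Return (file name, line) pairs for .pth lines Python would execute.

    The site module executes a .pth line when it starts with "import"
    followed by a space or a tab; other lines are treated as paths.
    """
    hits = []
    for pth in sorted(Path(directory).glob("*.pth")):
        for line in pth.read_text(errors="replace").splitlines():
            if line.startswith(("import ", "import\t")):
                hits.append((pth.name, line))
    return hits


def scan_site_packages():
    """Scan the interpreter's site-packages directories (there may be several)."""
    dirs = list(site.getsitepackages()) + [site.getusersitepackages()]
    return {d: find_executable_pth_lines(d) for d in dirs if Path(d).is_dir()}
```

Calling `scan_site_packages()` inside each virtual environment and container image gives a short list of startup hooks to read by hand.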

What did it collect? SSH keys, AWS/GCP/Azure credentials, Kubernetes configs, git tokens, .env files with all your API keys, shell history, crypto wallets, SSL private keys, CI/CD secrets, and database passwords. Everything encrypted with AES-256 and exfiltrated to an attacker-controlled domain.

How was it caught? Because the malware was so inefficient it crashed a machine. A researcher at FutureSearch was running an MCP plugin inside Cursor that pulled in LiteLLM as a transitive dependency. When version 1.82.8 installed, the machine ran out of RAM. He investigated and found the payload. Andrej Karpathy then amplified the finding.
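Because LiteLLM can arrive as a transitive dependency, it is worth checking the environment itself and not only your requirements file. Here is a minimal sketch using the standard library's `importlib.metadata`. The version set comes from this article, and the function names are illustrative.

```python
from importlib import metadata

# Versions named in this article as malicious.
AFFECTED_VERSIONS = {"1.82.7", "1.82.8"}


def is_affected(version):
    """True if the given version string is one of the poisoned releases."""
    return version in AFFECTED_VERSIONS


def installed_litellm_version():
    """Return the installed litellm version, or None if it is not installed."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


def check_environment():
    """Return a short status string for the current Python environment."""
    version = installed_litellm_version()
    if version is None:
        return "litellm not installed"
    if is_affected(version):
        return f"litellm {version} is an affected release"
    return f"litellm {version} is not one of the affected releases"
```

A clean result only describes the environment as it is now. If an affected version was ever installed, the credentials on that machine should still be treated as exposed.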

No security tool caught it. It was discovered by accident.

The security scanner was the attack vector

The attackers — a group called TeamPCP — didn’t go after LiteLLM directly. They first compromised Trivy, Aqua Security’s vulnerability scanner. On February 28, an automated bot exploited a workflow vulnerability in Trivy’s CI/CD pipeline and stole a personal access token.

Aqua fixed the surface-level damage but didn’t rotate all credentials. TeamPCP kept the access.

LiteLLM used Trivy in its own CI/CD pipeline to scan for vulnerabilities. When the pipeline ran with the compromised Trivy action, the PyPI publishing token was exposed. With that token, TeamPCP published the malicious versions directly to PyPI — no changes to the GitHub repository, no trace in the source code.

| What you assume | What actually happened |
|---|---|
| Security scanner protects the supply chain | Security scanner was the entry point |
| PyPI package matches GitHub source | Package was replaced without touching the repo |
| `pip install` just downloads code | `pip install` auto-executed malware |
| Credential management tool protects credentials | Credential management tool harvested them |

This wasn’t an isolated incident. According to SecurityWeek, TeamPCP crossed 5 ecosystems in 5 days: GitHub Actions, Docker Hub, npm, OpenVSX, and PyPI. Each compromise gave them the credentials to open the next. The incident was assigned CVE-2026-33634 with a CVSS score of 9.4.

Why this is different from XZ Utils

In February we wrote about open-source AI risks and vibe coding, using the XZ Utils backdoor (CVE-2024-3094) as a supply chain attack example. Good example. But LiteLLM is a different kind of problem in three ways.

XZ Utils was general Linux infrastructure — a compression library present on millions of servers. LiteLLM is AI-specific infrastructure. It doesn’t manage generic data; it manages the credentials that connect your organization to every AI provider you use. The XZ Utils attacker wanted server access. TeamPCP wanted the keys to your AI infrastructure.

The XZ Utils attacker spent two years building trust within the project before acting. TeamPCP crossed five ecosystems in five days. AI infrastructure is so new and so poorly guarded that speed, not patience, is the winning strategy.

Neither was caught by security tooling. XZ Utils was discovered by a developer who noticed 500 ms of extra latency. LiteLLM was discovered because the malware was written so poorly it crashed a machine. If the payload had been 10% more efficient, nobody would have noticed for weeks.

When the ecosystem shifts under your feet

At 15, I co-founded Word Magic Software with my father. It made translation and dictionary software that evolved from DOS to Windows and was featured by Apple worldwide. The day Google Translate became viable, Word Magic still worked perfectly. Our code didn’t have a single new bug. But the ecosystem we depended on, users installing desktop software for translations, evaporated.

The LiteLLM case is different in cause but identical in structure: the package worked fine. What failed was the layer that delivered it to your machine. In both cases, the tool wasn’t the problem. It was the link nobody was watching.

Tools are temporary. The trust chains that deliver them are too.

Most companies have nobody auditing this

The same week LiteLLM was attacked, Jason Shuman, GP at Primary VC, published data that confirms it: 54% of SMBs lack internal AI expertise. 41% have data quality too poor for AI to work. And 41% already prefer buying AI through a local IT provider rather than self-serve.

His thesis: while consumer AI marketing sells agents as $20/month subscriptions, real businesses are paying $10,000 to get them configured properly.

That’s exactly what we do at IQ Source for companies across Latin America. The AI technology is the same in San José, Costa Rica, as it is in San Francisco — same models, same APIs, same frameworks. What changes is who implements it, who audits the dependencies, and who responds when something like LiteLLM happens in your stack.

You can’t “1-click install” AI security. If your security posture for AI infrastructure is “we installed the popular package and moved on,” you’re in the majority. And the majority just got hit.

Anatomy of an AI trust chain

Your application doesn’t talk directly to OpenAI or Anthropic. Between your code and your AI providers sits a chain of intermediaries, and each one is a trust assumption:

Your app → LiteLLM (proxy) → AI Providers (OpenAI, Anthropic, Google...)

Your app → pip install → PyPI → package maintainer → their CI/CD pipeline

Your pipeline → security scanner → the scanner's own dependencies

Each arrow is a link that can break. Most companies audit the first chain: which AI providers they use, which models, what SLAs they have. Almost nobody audits the second or third.

While evaluating an open-source project requires looking at the codebase itself, securing an AI proxy requires interrogating the intermediaries:

- Who maintains the packages that sit between your code and your AI provider?

- What happens if one of those intermediaries gets compromised?

- Do you pin exact versions with verified hashes, or always install latest?

- When was the last time anyone on your team verified that `pip install package` actually installs the code from the GitHub repo?

- Is your security scanning tool just another dependency in your pipeline, or do you evaluate it with the same rigor as the code it scans?

If you can’t answer more than two, your trust chain has blind spots.

What we do at IQ Source when a client uses AI proxies

This isn’t generic “update your dependencies” advice. This is what we specifically review when a company uses LiteLLM or any proxy that centralizes AI credentials:

Dependency lockdown. We pin exact versions with verified hashes. Nothing auto-updates in packages that manage credentials. Every update goes through manual diff review between versions before hitting production.
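As an illustration of the first half of that rule, here is a minimal sketch that flags requirements entries lacking an exact `==` pin or a `--hash=sha256:` value. It is a simplified parser written for this example, not pip's own, and the function name is hypothetical. pip enforces the same rule at install time when run with `--require-hashes`.

```python
import re

# A name (optionally with extras) followed by an exact "==" version pin.
PINNED = re.compile(r"^[A-Za-z0-9._\-\[\],]+==[^\s;]+")


def unpinned_requirements(text):
    """Return requirement entries lacking an exact pin or a sha256 hash."""
    # Join backslash-continued lines so each requirement is one entry.
    joined = re.sub(r"\\\s*\n", " ", text)
    problems = []
    for raw in joined.splitlines():
        entry = raw.split(" #")[0].strip()  # drop trailing comments
        if not entry or entry.startswith(("#", "-")):
            continue  # blank lines, comments, and pip options such as -r or -i
        has_pin = PINNED.match(entry) is not None
        has_hash = "--hash=sha256:" in entry
        if not (has_pin and has_hash):
            problems.append(entry)
    return problems
```

Running it over `requirements.txt` in CI and failing the build on a non-empty result keeps an unreviewed release from arriving through a loose version range.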

Credential isolation. No single package gets access to all your AI API keys at once. We segment by environment (development, staging, production) and by use case. If one environment gets compromised, the blast radius stays contained.
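One way to make that segmentation checkable is a startup guard that refuses to run when a process can see keys belonging to another environment. The sketch below assumes a hypothetical naming convention, `<ENV>_<PROVIDER>_API_KEY`. Neither LiteLLM nor any provider requires it, and your secret manager may make a different check more natural.

```python
import os

KNOWN_ENVIRONMENTS = ("DEV", "STAGING", "PROD")  # hypothetical naming scheme


def foreign_key_names(current_env, environ=None):
    """List API-key variables that belong to a different environment.

    Assumes variables are named <ENV>_<PROVIDER>_API_KEY; that convention
    is an illustration only.
    """
    source = os.environ if environ is None else environ
    current = current_env.upper()
    return sorted(
        name
        for name in source
        if name.endswith("_API_KEY")
        and name.split("_", 1)[0] in KNOWN_ENVIRONMENTS
        and name.split("_", 1)[0] != current
    )


def assert_isolated(current_env, environ=None):
    """Raise if this process can see another environment's keys."""
    leaked = foreign_key_names(current_env, environ)
    if leaked:
        raise RuntimeError(f"keys from other environments are visible: {leaked}")
```

Calling `assert_isolated("staging")` at service startup turns a misconfigured deployment into an immediate failure, where otherwise it would quietly widen the blast radius.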

Trust chain audit. We map every intermediary between your code and your providers. How many packages sit in between? Which ones auto-execute code on install? Which ones depend on security tools that could, ironically, be the attack vector?
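The "how many packages sit in between" question can be approximated from installed metadata. Here is a minimal sketch, assuming the package is installed in the current environment. It uses `importlib.metadata.requires` and a simplified name parser written for this example, skips optional extras, and does not cover CI actions or system packages.

```python
import re
from importlib import metadata

NAME = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def installed_requirements(package):
    """Direct dependency names declared by an installed distribution."""
    try:
        declared = metadata.requires(package) or []
    except metadata.PackageNotFoundError:
        return []
    names = []
    for requirement in declared:
        if re.search(r"extra\s*==", requirement):
            continue  # skip dependencies that only apply to optional extras
        match = NAME.match(requirement)
        if match:
            names.append(match.group(1).lower())
    return names


def transitive_dependencies(root, requires=installed_requirements):
    """Walk the dependency graph breadth-first; return every package reached."""
    start = root.lower()
    seen, queue = set(), [start]
    while queue:
        current = queue.pop(0)
        for dep in requires(current):
            if dep not in seen and dep != start:
                seen.add(dep)
                queue.append(dep)
    return sorted(seen)
```

`transitive_dependencies("litellm")` returns the packages that a single install pulls into the same process as your API keys. The length of that list is a rough measure of how many maintainers you are trusting.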

Detection that doesn’t rely on luck. The LiteLLM malware was caught because it crashed a machine. That’s not a strategy. We implement behavioral monitoring: what does normal traffic from your AI proxy look like? Alerts trigger when the pattern shifts — not when the attacker makes a mistake.
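The simplest version of a traffic baseline is an egress allowlist: an AI proxy should talk to a small, known set of provider hosts, so anything else is worth an alert. The sketch below is a deliberately basic stand-in for fuller behavioral monitoring. The suffix list is an assumption you would replace with the providers you actually use, and the hostnames would come from your own DNS or proxy logs.

```python
# Assumed provider domains; adjust to the providers your proxy really calls.
ALLOWED_SUFFIXES = (
    "api.openai.com",
    "api.anthropic.com",
    "googleapis.com",
    "amazonaws.com",
)


def unexpected_destinations(observed_hosts, allowed_suffixes=ALLOWED_SUFFIXES):
    """Return observed hostnames that match no allowed suffix, in first-seen order."""
    flagged = []
    for host in observed_hosts:
        name = host.lower().rstrip(".")
        # Match the suffix itself or a subdomain of it, never a lookalike prefix.
        ok = any(name == s or name.endswith("." + s) for s in allowed_suffixes)
        if not ok and name not in flagged:
            flagged.append(name)
    return flagged
```

Matching on a leading dot matters: `evilapi.openai.com.example.net` contains an allowed name but is not a subdomain of it, so it is flagged.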

If your team uses LiteLLM or any Python package managing AI credentials, we wrote about the risks of deploying AI frameworks to production that apply directly here.

Send us your requirements.txt through our contact page. We audit your trust chain in 2 hours and tell you exactly which links are vulnerable.

Frequently Asked Questions

On March 24, 2026, the TeamPCP group poisoned LiteLLM versions 1.82.7 and 1.82.8 on PyPI, injecting malware that harvested AI credentials, SSH keys, and Kubernetes secrets. LiteLLM is an AI API key proxy with ~97 million monthly downloads. The incident was assigned CVE-2026-33634 with a CVSS score of 9.4.

TeamPCP first exploited a vulnerability in Trivy's CI/CD pipeline — Aqua Security's vulnerability scanner — and stole credentials. Because LiteLLM used Trivy in its own pipeline, the stolen credentials included the PyPI publishing token. With that token, TeamPCP published malicious versions directly to PyPI without touching the GitHub repository.

An AI trust chain is every dependency between your application and your AI providers: the proxy managing API keys, the package manager that installs it, and the security tools that scan it. Each link is an unverified trust assumption. The LiteLLM attack showed that attackers target intermediate links, not the visible endpoints.

Four concrete actions: pin exact package versions and verify hashes before updating, isolate AI credentials by environment so no single package accesses all keys at once, audit the trust chain by mapping every intermediary between your code and your providers, and implement behavioral monitoring that detects anomalies without relying on the attacker making mistakes.
