Karpathy's AI Exposure Map: All 342 Occupations Scored

Ricardo Argüello — March 16, 2026

CEO & Founder

General summary

Karpathy scored all 342 BLS occupations on a 0-10 AI exposure scale. If the work output is digital and can be done remotely, exposure is high. ~25M jobs in the 8-10 zone, with $3.7T in linked wages.

- 0-10 scale applied to all 342 Bureau of Labor Statistics occupations, using an LLM evaluator with a standardized rubric

- The two-variable rule: if the output is digital and the work can be done remotely, AI exposure is high — regardless of industry

- Weighted average 4.9 vs. unweighted 5.3: highly-exposed jobs employ fewer people but pay more per person

- Paralegals, software developers, and data analysts score 8-9; physicians and nurses score 4-5 because their work requires physical presence

- Exposure is not replacement: a score of 8 means the role changes, not that it disappears — companies need augmentation plans, not elimination plans

Imagine every job at your company scored 0-10 based on what AI can do today: that's what Karpathy did for 342 occupations. Most companies focus their AI strategy on engineering, even though exposure spans the whole org.


Andrej Karpathy, an OpenAI co-founder, former Director of AI at Tesla, and one of the most credible voices in deep learning, published an open-source project scoring all 342 Bureau of Labor Statistics occupations on a 0-to-10 AI exposure scale. The original repo was taken down, but a fork survives and the data spread widely, amplified by Linas Beliūnas, Marc Andreessen, and Tim Haldorsson’s interactive visualization.

The reaction was predictable: headlines about “jobs that will disappear” and ranked lists of the most affected. The problem is that most of those interpretations are wrong. What Karpathy measured isn’t which occupations die — it’s which are most exposed to what AI can already do. That difference separates companies that plan from companies that react.

What Karpathy Actually Did

The project takes the 342 standard occupational categories from the BLS — the same taxonomy the U.S. Department of Labor uses for employment and wage reporting — and runs them through an LLM with a standardized evaluation rubric. Each occupation gets a score from 0 (no exposure) to 10 (maximum exposure).

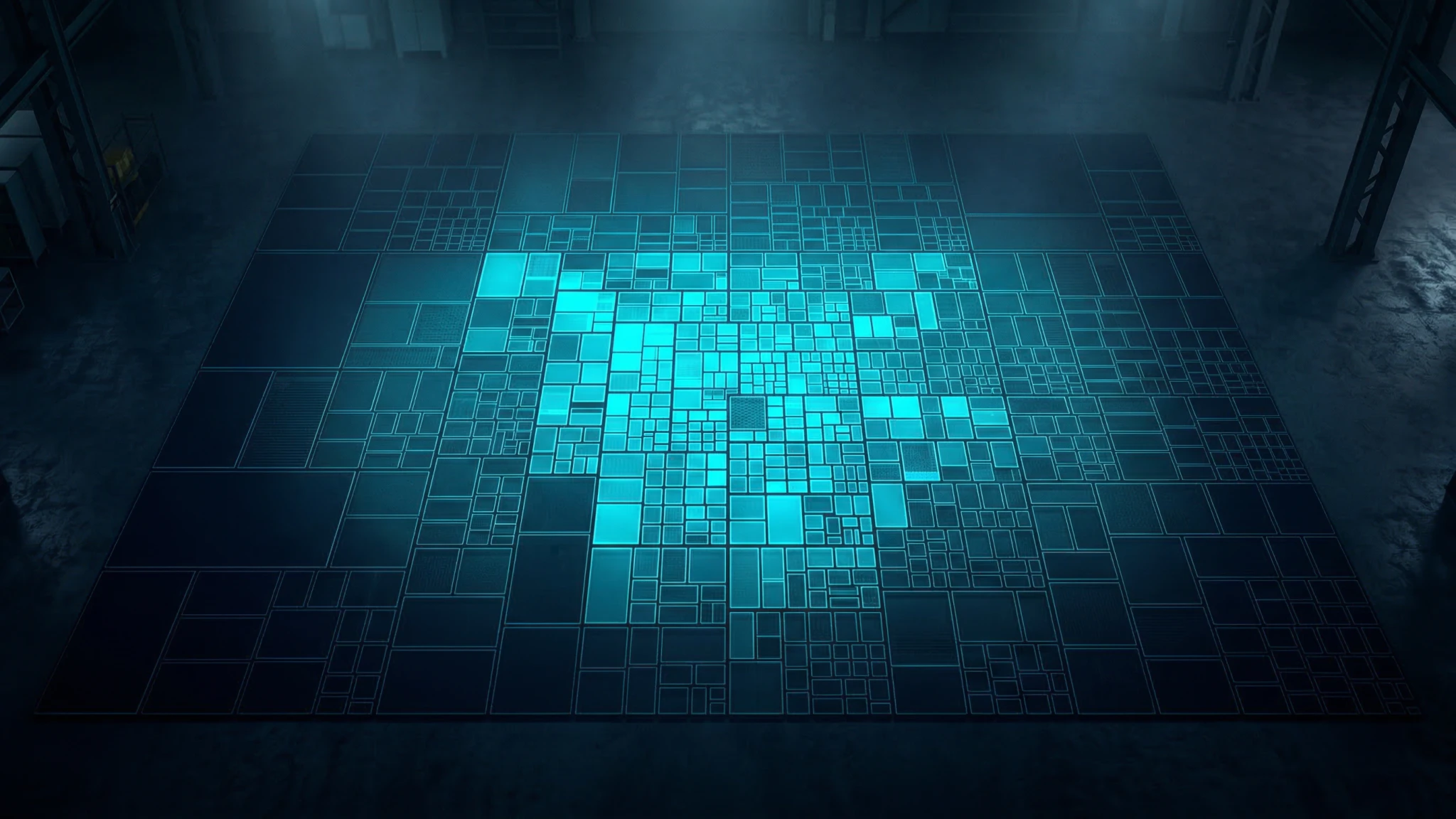

The output is a treemap: a visual map where each rectangle represents an occupation, its size reflects the number of employees, and its color represents exposure intensity. The data includes median wages, worker counts, and the assigned score. You can explore the interactive treemap at this live version of the fork (note: as an unofficial fork, it may be taken down at any time).

It’s not a predictive model. It doesn’t say when each job changes, or how many positions are lost. It’s a current-state snapshot: given what AI can do today, how exposed is each occupational category?

The credibility comes from two directions. First, Karpathy has the technical depth to design a solid rubric — he’s not an analyst repeating papers; he’s someone who built AI systems at scale. Second, the project uses the official BLS taxonomy, making the results comparable with any existing economic analysis.

The Two-Variable Rule

Across all 342 scores, a pattern emerges that reduces to two questions:

- Is the work output digital? — text, code, data, analysis, digital design

- Can the work be done remotely? — no required physical presence

The combination of both answers predicts the score with striking accuracy:

| | Not remote | Remote |
|---|---|---|
| Non-digital output | Roofers, janitors (0-1) | Rare category |
| Digital output | Nurses, physicians (4-5) | Software devs, paralegals (8-9) |

Roofers produce physical output and work on-site, so AI has no entry point. Nurses produce partially digital outputs (clinical documentation) but require constant physical presence: AI enters the documentation side but doesn’t replace the care. Software developers produce 100% digital output and can work from anywhere, which puts them near the top of the scale.

The lower-right quadrant (digital output + remote) concentrates the 8-10 scores. Not because those jobs are “easy” — many require years of training — but because the type of output they produce is exactly what language models and AI tools generate today.
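The two questions above can be sketched as a small classifier. This is a minimal illustration, not Karpathy's rubric: the `Role` class, the `quadrant` function, and the yes/no answers assigned to each example are assumptions made for this sketch, based on how the article describes roofers, nurses, and software developers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Role:
    """Hypothetical record holding the answers to the two questions."""
    name: str
    digital_output: bool   # is the primary work product text, code, data, or design files?
    remote_capable: bool   # can the work be done without physical presence?


def quadrant(role: Role) -> str:
    """Place a role in one of the four cells of the two-variable matrix."""
    if role.digital_output and role.remote_capable:
        return "digital + remote"
    if role.digital_output:
        return "digital + on-site"
    if role.remote_capable:
        return "non-digital + remote"
    return "non-digital + on-site"


# The answers below are illustrative readings of the article's examples,
# not values taken from the dataset.
roles = [
    Role("Roofer", digital_output=False, remote_capable=False),
    Role("Nurse", digital_output=True, remote_capable=False),
    Role("Software developer", digital_output=True, remote_capable=True),
]

by_quadrant = {role.name: quadrant(role) for role in roles}
for name, cell in by_quadrant.items():
    print(f"{name}: {cell}")
```

The function returns a quadrant label rather than a numeric score on purpose: the two questions sort roles into cells of the matrix, while the 0-10 scores themselves come from the LLM rubric.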

At IQ Source, we apply a similar analysis when scoping AI integration projects — not at the generic occupation level, but at the process level within each client’s organization. The logic is identical: if a process output is digital and doesn’t require in-person judgment, there’s a concrete opportunity.

$3.7 Trillion Isn’t a Forecast

The most-shared number: $3.7 trillion in wages are tied to occupations in the 8-10 exposure zone. That represents ~25 million U.S. jobs.

Two nuances most analyses miss:

The weighted average is lower than the unweighted. The simple average across all 342 occupations is 5.3/10. When you weight by number of employees, it drops to 4.9. Why? High-exposure jobs (8-10) tend to employ fewer people but pay more per person. Medical transcriptionists score 10 but number ~55,000 in the U.S. Cashiers score low but number in the millions.

The $3.7T is a current-state measurement, not a projection. It doesn’t say “$3.7T in wages will be lost.” It says: “today, $3.7T in wage mass corresponds to occupations where AI has high intervention potential.” It’s a map of the territory, not a weather forecast.

The distinction matters because it completely changes what to do with the data. If you believe $3.7T is about to evaporate, the natural reaction is to protect yourself. If you understand it as $3.7T where AI already has intervention capability — then the question becomes where to invest first.
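The gap between the weighted and unweighted averages, and the way a wage total for the 8-10 zone is built, can be reproduced on a toy table. The four rows below are invented for illustration and are not taken from Karpathy's dataset; approximating wage mass as workers times median wage is also an assumption of this sketch, since the article does not spell out how the $3.7T figure was computed.

```python
# Hypothetical rows: (occupation, exposure score, workers, median annual wage in USD).
# All numbers are made up for illustration only.
occupations = [
    ("Occupation A", 9, 100_000, 90_000),
    ("Occupation B", 8, 200_000, 80_000),
    ("Occupation C", 5, 500_000, 60_000),
    ("Occupation D", 2, 1_200_000, 35_000),
]

# Unweighted: every occupation counts once, regardless of headcount.
scores = [score for _, score, _, _ in occupations]
unweighted = sum(scores) / len(scores)

# Weighted: every worker counts once, so large occupations dominate.
total_workers = sum(workers for _, _, workers, _ in occupations)
weighted = sum(score * workers for _, score, workers, _ in occupations) / total_workers

# Current-state totals for the 8-10 zone (assumed: wage mass = workers x median wage).
high_zone = [(workers, wage) for _, score, workers, wage in occupations if score >= 8]
jobs_high = sum(workers for workers, _ in high_zone)
wage_mass_high = sum(workers * wage for workers, wage in high_zone)

print(f"unweighted mean: {unweighted:.1f}")
print(f"employment-weighted mean: {weighted:.1f}")
print(f"jobs in 8-10 zone: {jobs_high:,}")
print(f"wage mass in 8-10 zone: ${wage_mass_high / 1e9:.1f}B")
```

In this toy table the weighted mean falls below the unweighted one for the same reason as in the article: the low-scoring occupation employs the most people. The wage total is a sum over today's headcounts and wages, so it describes the present and carries no forecast.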

The Surprised and the Unsurprised

When most companies think about “AI replacing work,” they picture factory robots or call center chatbots. Karpathy’s map shows something different: the highest exposure is in roles most executives would classify as “hard-to-automate knowledge work.”

| Occupation | Score | Surprise? |
|---|---|---|
| Medical transcriptionists | 10 | No: 100% digital output, zero presence |
| Software developers | 8-9 | Partial: already visible with code copilots |
| Paralegals and legal assistants | 8-9 | Yes: many law firms haven’t internalized this |
| Data analysts | 8-9 | Partial: models already write SQL and Python |
| Accountants and auditors | 7-8 | Yes: accounting is perceived as “too regulated” |
| Technical writers | 8-9 | No: purely text-based output |
| Physicians | 4-5 | Yes, in reverse: many expected higher |
| Nurses | 3-4 | No: physical presence is the anchor |
| Electricians | 1-2 | No: manual work, site-specific |
| Construction managers | 3-4 | Partial: digital coordination but on-site supervision |

Paralegals are the revealing case. They spend ~60% of their time on document review — a digital, remote, repetitive output. A Thomson Reuters study already showed that AI tools in legal due diligence reduce review time by ~70%. Law firms not testing this will feel the impact abruptly.

Same with accountants. Manual audit of journal entries — consuming weeks at midsize firms — is exactly the type of task a language model with access to structured data handles well. It doesn’t replace the accountant — it frees them from mechanical verification to focus on interpretation and advisory.

If your company has more than 200 employees, our department-by-department AI guide details how augmentation opportunities vary across every functional area — from finance to operations.

How to Use This Map Inside Your Company

Karpathy’s data is useful but generic — it scores standardized occupations, not your specific processes. The real question is what you do with that information inside your organization.

Apply the two-variable test to your own roles. Take your org chart and for each position ask: is the primary output digital? Can it be done remotely? You don’t need an exact score — separating your roles into the four quadrants of the matrix already gives you a priority map. Roles in the high-digital + remote quadrant need an augmentation plan first.

Separate exposure from replacement. A score of 8 doesn’t mean “eliminate this position.” It means AI can significantly intervene in the tasks that role performs. A paralegal who goes from manually reviewing 200 documents to supervising an AI tool that reviews them is still a paralegal — but their productivity multiplies and the type of value they deliver changes. The company that trains that paralegal today has the advantage. The one that waits until cost pressure forces the decision loses talent and time.

Build augmentation plans before cost pressure forces cuts. The usual sequence is: competitor implements AI → margins compress → company cuts staff → discovers it has no one to operate the AI tools. That’s the wrong sequence. The right one is to invest in augmentation and training while margins allow it. We explored this in depth in our analysis of the engineering bifurcation paradox: talent that knows how to work with AI is separating fast from talent that doesn’t, and the gap widens every quarter.

At IQ Source, we help companies move from “we know which roles are exposed” to “we have an augmentation plan for each.” That includes identifying which processes are real candidates and which tools make sense for each team. We also define how to measure whether augmentation is actually working — not in theory, but with concrete productivity metrics.

What Karpathy Didn’t Measure

The map has real limitations worth naming.

No timeline. A score of 9 doesn’t say whether the impact arrives in 2026 or 2032. It measures current AI capability, not adoption speed — and adoption depends on regulation and organizational inertia as much as it does on implementation costs or the availability of mature commercial tools.

No new roles. Every technology wave destroys job categories and creates others. The map scores the 342 categories that exist today — not the ones that will exist in five years. “Prompt engineer” doesn’t appear in the BLS taxonomy. “AI agent supervisor” doesn’t either.

And there’s an angle the map ignores entirely: complement dynamics. Here it’s worth citing Kevin A. Bryan, economist at the University of Toronto, who published a direct argument (later endorsed by Marc Andreessen): he’d bet $1,000 that most “susceptible” occupations will see an increased share of the labor market by 2030, not a decreased one. His logic: AI lowers the cost of the tasks those workers perform, which increases demand for the full service that includes those tasks. More demand for automated legal review = more demand for paralegals who know how to use those tools, not less.

It’s not a sure bet — nobody has one. But it reinforces the central point: exposure is not extinction. An occupation scoring 9 is an occupation about to transform, and the difference between benefiting from that transformation or suffering it is how prepared the organization is.

We covered this with concrete data in our analysis of the 95% untapped potential gap: most companies have access to the same AI tools, but only capture a fraction of the available value. Karpathy’s map shows where to look. Capturing the value depends on execution.

Karpathy scored occupations. Our Automation Opportunity Finder scores your actual processes — not generic BLS labels, but the specific workflows in each department of your company. Free, 5 minutes, immediate results.

Frequently Asked Questions

What does Karpathy's AI exposure scale measure?

Karpathy's scale rates all 342 Bureau of Labor Statistics occupations from 0 to 10, using an LLM evaluator with a standardized rubric. The scores track two factors: whether the work output is digital and whether it can be performed remotely. The unweighted average is 5.3/10, or 4.9 when weighted by employment. It measures how exposed each occupation is to current AI capabilities, not the likelihood of job replacement.

Which occupations score highest?

The highest-scoring occupations include medical transcriptionists (10/10), software developers (8-9), paralegals (8-9), and data analysts (8-9). In contrast, physicians and nurses score 4-5 due to required physical presence. The key factor is the combination of digital output and remote work capability.

Does a high score mean the job will disappear?

No. Exposure measures what AI can do in those tasks today, not job elimination. Most roles scoring 8-9 change in nature rather than vanish: paralegals who spend hours on document review shift to supervising AI tools performing that review. The work transforms; it doesn't disappear.

How can a company use the map?

Apply the two-variable test to every role in your organization: is the output digital? Can it be done remotely? Roles meeting both criteria need priority AI augmentation plans. Separating exposure from replacement is key: a high score means investing in training and tools before cost pressure forces cuts.
