Autonomous Research: What Autoresearch Reveals

Ricardo Argüello — March 8, 2026

CEO & Founder

General summary

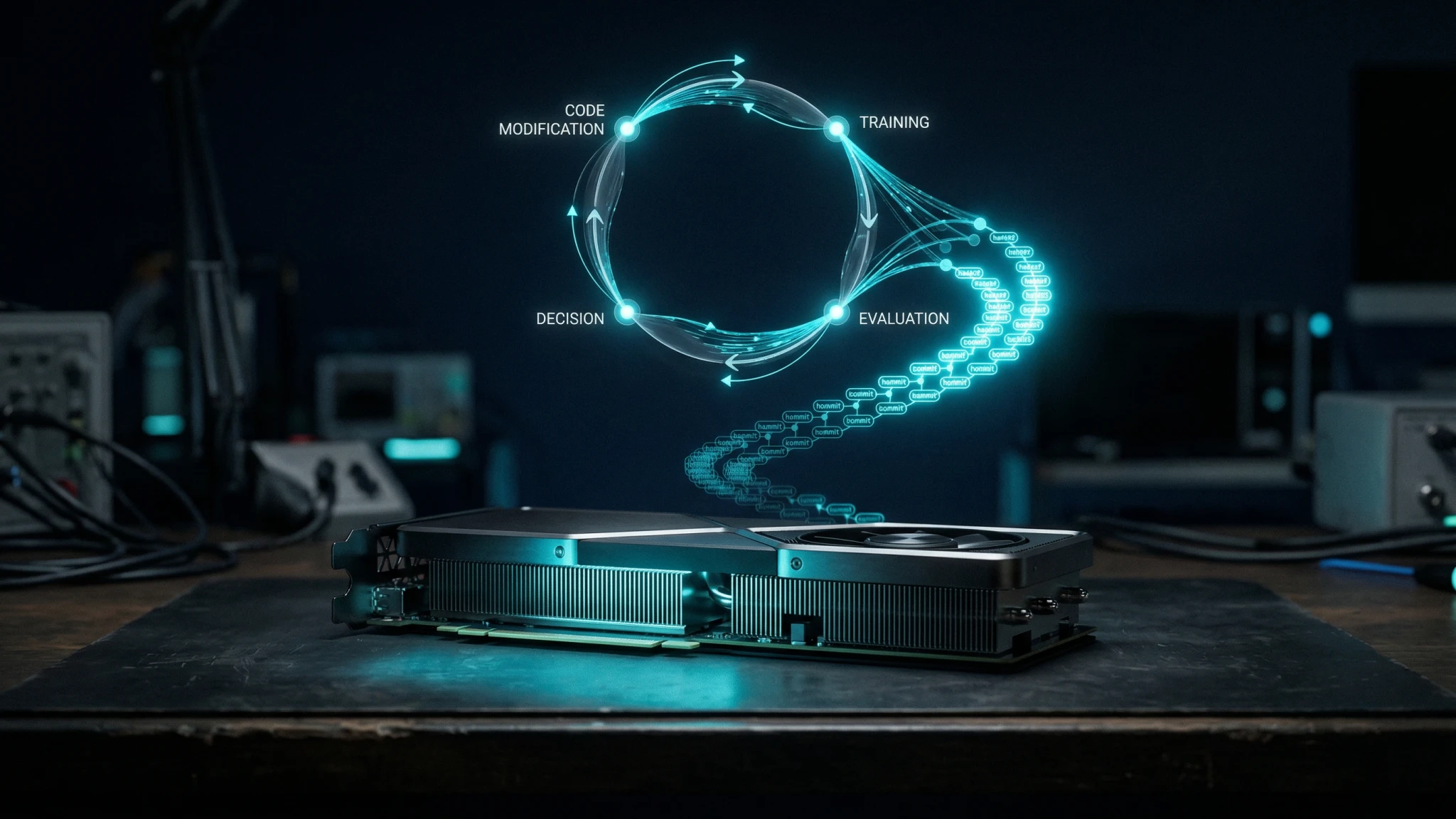

Andrej Karpathy released autoresearch: a 630-line repo where an AI agent modifies code, trains models, and evaluates results in an autonomous loop — 12 experiments per hour, 100 overnight. His next step, SETI@home-style collaborative agent swarms, signals real changes in how enterprises organize technical work.

- Autoresearch runs 12 experiments per hour autonomously — about 100 overnight on a single GPU

- Git serves as the agent's memory: each experiment is a commit, kept if it improves results, discarded if not

- The pattern applies beyond research — any process with a clear measurable metric can use iterate-test-evaluate loops

- Karpathy's next vision: collaborative agent swarms working asynchronously on shared problems

- Limitation: works best with a single, unambiguous metric; most business problems involve multi-variable trade-offs

Imagine setting up a research assistant before you go to bed, and waking up to find it ran 100 experiments, kept the ones that worked, threw out the ones that didn't, and left you a ranked list of results. That's what Karpathy built in 630 lines of code. The bigger idea is that this pattern — set a goal, let the AI iterate, review the results — applies to far more than just research.

AI-generated summary

Karpathy published a repo that runs AI research while he sleeps. Not as a metaphor — he literally configures a file, goes to bed, and wakes up to 100 completed experiments with ranked results.

For those who don’t know him: Andrej Karpathy was Director of AI at Tesla (led the Autopilot vision system), founding member at OpenAI, Stanford PhD, and now founder of Eureka Labs. When he ships something, it’s not a casual weekend project. It’s a signal of where the industry is heading.

I cloned the repo and read the code. It’s 630 lines. That’s it. And what those 630 lines do is more interesting than most research papers I’ve read this year. But the important thing isn’t the code itself — it’s the pattern it demonstrates. Autonomous iterate-test-evaluate loops that already apply to business operations.

What autoresearch actually does

The flow is straightforward. The human writes a file called program.md — high-level instructions on what to research. The agent takes those instructions, modifies train.py, trains a model for 5 minutes, evaluates the validation loss (val_bpb), decides whether the change improved or worsened the result, and repeats. No human intervention.

The numbers: ~12 experiments per hour. ~100 overnight. Each one is a complete LLM training run.

What’s elegant is how it uses git as memory. Every experiment is a commit on a feature branch. If the result improves, the commit stays. If it worsens, it gets discarded. The git history becomes a complete record of what worked and what didn’t — no databases or dashboards needed.

It all runs on a single GPU. Self-contained. As Karpathy put it: “Part code, part sci-fi, and a pinch of psychosis.”

The repo is on GitHub and fully open-source.

The pattern that matters: iterate without waiting

If you abstract what autoresearch does, the pattern is: define objective → agent attempts solutions → measure against metric → keep or discard → repeat.

That pattern already exists in every company. What changes is who occupies each part of the loop. Today, humans sit in the iteration loop — testing configurations, tweaking parameters, running tests, reviewing results. That’s the bottleneck. Not because of a lack of skill, but because a human does one thing at a time and needs to sleep.

What autoresearch demonstrates is a clean separation: the human stays in the direction loop — defines what to research, which constraints apply, which metrics matter. The agent stays in the iteration loop — executes, measures, decides, repeats. Karpathy writes program.md; the agent does everything else.

This is exactly what we see in the agent operator role we’re already implementing with clients. The human doesn’t disappear — they shift position. From executing to directing.

In a recent project, we set up a data validation agent that tested 40 different extraction rules overnight. The team came in the next morning with a ranked list of top performers. What would have taken a week of manual trial-and-error resolved in 8 hours of unsupervised work.

From one agent to a swarm

But Karpathy didn’t stop at a single agent looping. His follow-up post proposes something more ambitious: SETI@home-style collaborative agent swarms. Not one virtual PhD student, but a research community of agents.

The idea: many agents working asynchronously on shared problems. Instead of a single loop, multiple agents adopt different branches, accumulate thousands of commits, and explore the solution space faster than any individual agent.

The quote that stuck with me: “Existing abstractions will accumulate stress as intelligence, attention and tenacity cease to be bottlenecks.” In other words — the tools and processes we use were designed for human-speed output. When that constraint lifts, the tools break.

The concrete example: GitHub’s design assumes human workflows. Master → branch → merge → review. An agent producing thousands of commits across arbitrary structures doesn’t fit that flow. The abstractions strain.

The enterprise parallel is direct. Approval chains, QA processes, reporting cycles — all assume output arrives at human speed. If an agent produces results at 12 per hour, you need batch review and automated quality gates — statistical validation replaces the line-by-line code review that worked at human speed.

And this isn’t a 5-year prediction. autoresearch works today. The collaborative layer is what comes next.

What doesn’t work yet

Let’s be honest. autoresearch optimizes a narrow, clean metric: val_bpb (validation loss in bits per byte). A number goes down = better. No ambiguity.

Most business problems don’t have a single, clean metric. “Improve customer satisfaction” isn’t val_bpb. “Reduce operational costs” involves trade-offs between quality, speed, and compliance that an agent can’t resolve alone.

Agents lack strategic judgment. They optimize the metric you give them, but they don’t understand why that direction matters. An agent that reduces data extraction costs by 30% but introduces errors in regulated fields hasn’t solved anything — it’s created a new problem.

And in regulated industries, “what changed” (a git diff) isn’t enough. You need to explain why each change was made and what risks were evaluated. That still requires humans.

What this means for your operation

Autonomous iteration is production-ready for well-defined problems. If you have a process with a clear metric — order accuracy, extraction precision, response time — an agent can iterate overnight. You don’t need 630 lines of ML code. You need a clear loop: attempt → measure → decide → repeat.

The “direction + constraints” model is how you deploy agents today. Karpathy’s program.md is the template. Define what you want to achieve and what’s off-limits. The agent executes within those boundaries. This is exactly what we describe in the enterprise AI agents playbook — the difference between a useful agent and a dangerous one comes down to the quality of the constraints.

Your tools weren’t built for agent-speed iteration. 100 experiments per night means your version control, approval workflows, and monitoring need to handle that volume. You can’t manually review each result. You need organizational structures that assume continuous output, not discrete deliveries.

Karpathy included a paragraph that reads like science fiction — about “meat computers” that program agents that run research. It sounds like fiction, but autoresearch is the first paragraph of that story being written today.

The business implication isn’t “AI replaces everyone.” It’s that companies that figure out how to direct autonomous iteration loops — with clear metrics, good constraints, and human oversight at the strategic level — will operate at a different speed. The ones still waiting for every cycle to pass through human hands will compete against organizations that iterate 12 times per hour.

If you already have a process with a clear metric and a manual iteration cycle, that’s your candidate. At IQ Source, we define the equivalent of your program.md and set up the loop. Book a 60-minute session and we’ll map it together.

Frequently Asked Questions

It is an open-source repo of about 630 lines where an AI agent autonomously modifies training code, runs 5-minute experiments, evaluates validation loss, and keeps or discards each change. It runs roughly 12 experiments per hour — about 100 overnight — on a single GPU.

Yes, for processes with clear, measurable metrics — data extraction accuracy, error rates, document classification precision. The agent iterates overnight and surfaces ranked options for human review the next morning. Processes without clean metrics still need human judgment in the loop.

Karpathy envisions many agents working asynchronously on shared problems, similar to a SETI@home for research. Instead of one agent looping, many agents adopt different branches, accumulate thousands of commits, and explore the solution space faster than any single agent could.

It works best with a single unambiguous metric. Most business problems involve trade-offs between cost and quality, speed and compliance. Agents lack strategic judgment about why a direction matters, and regulated industries need audit trails that explain reasoning, not just diffs.

Related Articles

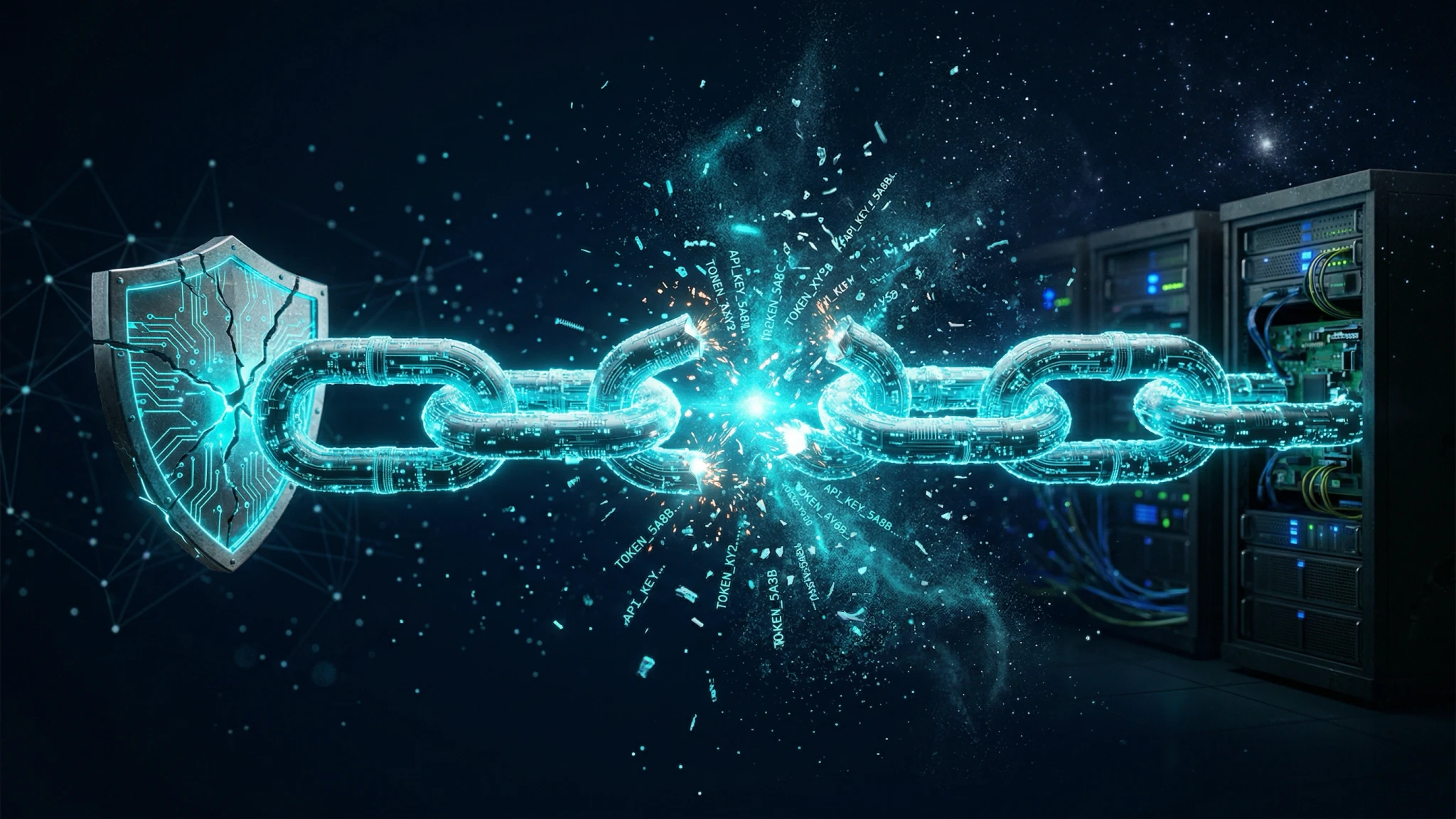

LiteLLM Attack: Your AI Trust Chain Just Broke

LiteLLM, the AI API key proxy with 97 million monthly downloads, was poisoned via PyPI. Your security scanner was the entry point.

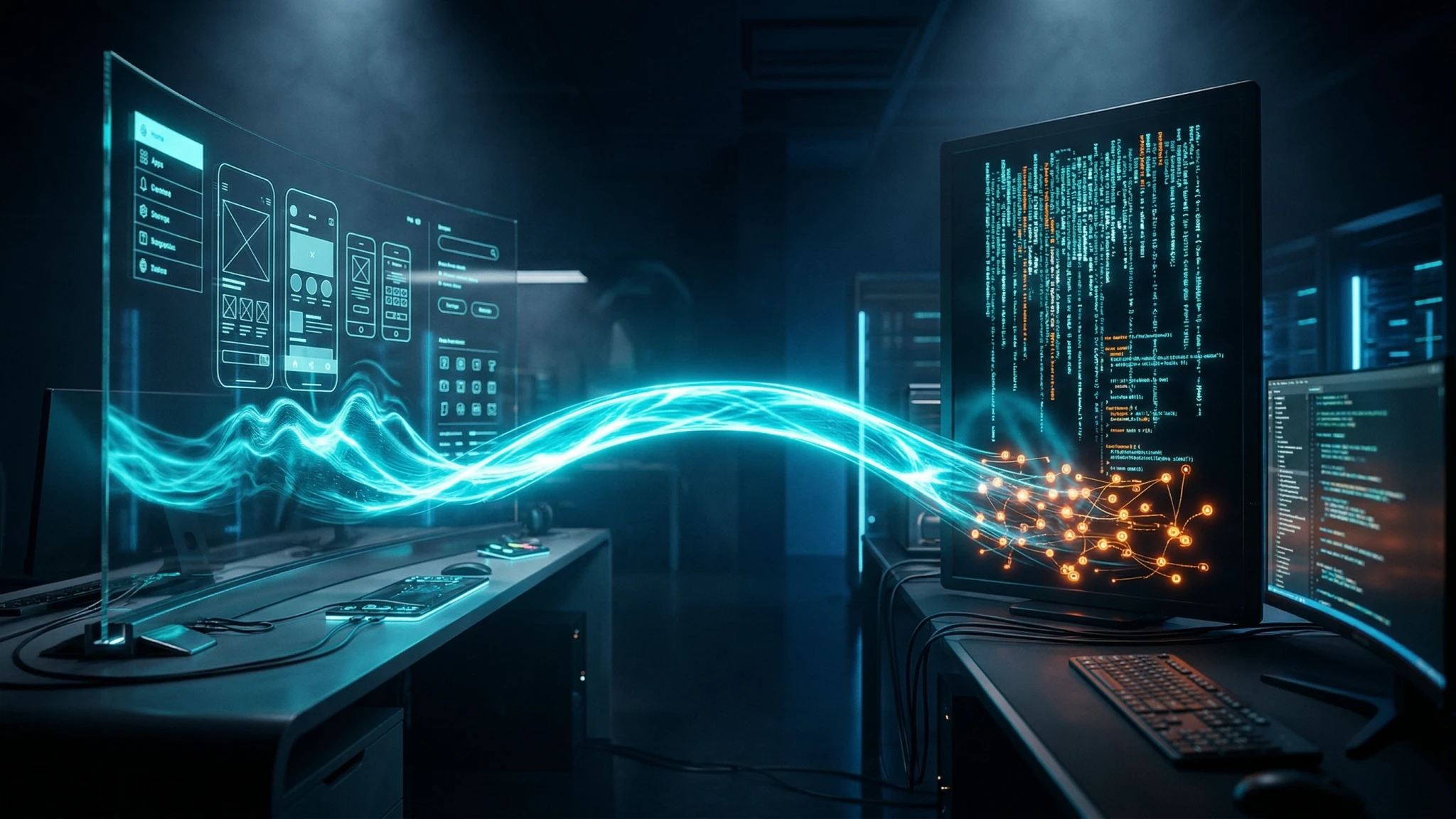

Google Stitch + AI Studio: Design-to-Code Without Engineers

Google shipped a full design-to-production pipeline with Stitch and AI Studio. Where it works for B2B prototypes and where you still need real engineering.